Multiverse launches compressed OpenAI language model designed to cut memory needs and lower AI infrastructure costs

Spanish AI company Multiverse Computing has released HyperNova 60B 2602, a compressed version of OpenAI’s gpt-oss-120B that is now available for free on Hugging Face. The company positions it as a step forward in AI efficiency: a large language model that needs far less memory to run while keeping most of the original’s capability.

The Big Breakthrough: Cutting Memory Needs in Half

HyperNova 60B 2602 cuts the original model’s memory requirements from 61GB to 32GB, a reduction of roughly 48%. Multiverse Computing claims it retains near-parity tool-calling performance despite the smaller footprint. For developers working with tight budgets or energy constraints, about half the memory at close to the same capability is a significant gain.
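
As a quick check on the figures above, the reduction from 61GB to 32GB can be worked out directly. The snippet below is only an arithmetic sketch using the two numbers quoted in the article; the variable names are illustrative.

```python
# Memory figures quoted in the article, in GB
original_gb = 61
compressed_gb = 32

saved_gb = original_gb - compressed_gb
reduction = saved_gb / original_gb          # fraction of memory saved
size_ratio = original_gb / compressed_gb    # how many times smaller the model is

print(f"saved: {saved_gb} GB")
print(f"reduction: {reduction:.1%}")
print(f"size ratio: {size_ratio:.2f}x")
```

The saving comes out a little under half (about 47.5%), which is why the reduction is commonly rounded to "half the memory".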

In practice, a model that once required heavy infrastructure can run on far less hardware. It’s like moving from a gas-guzzling SUV to an electric car while giving up very little horsepower. That kind of efficiency could matter for startups, indie developers, and large enterprises looking to optimize their AI deployments.

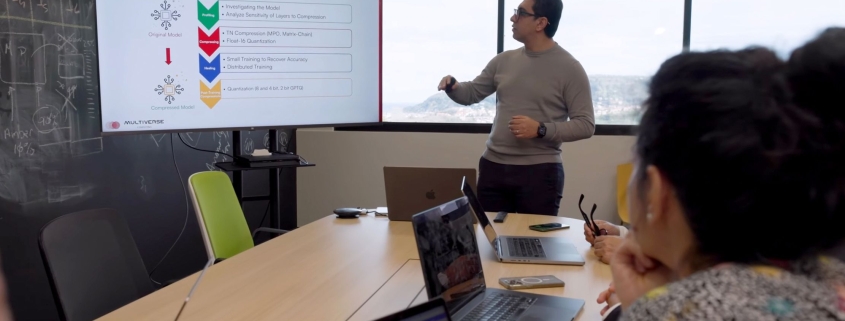

CompactifAI: The Secret Sauce

So, how did Multiverse pull this off? The answer lies in its proprietary CompactifAI technology. Rather than applying generic compression, CompactifAI restructures transformer weight matrices using quantum-inspired tensor networks, replacing large matrices with smaller, structured factors.

Think of it like this: instead of removing bricks from a building, CompactifAI redesigns the internal framework so it uses far less material while preserving strength and functionality. Multiverse presents it as a smarter, more efficient way to compress AI models.

Performance That Speaks for Itself

Multiverse backs its claims with benchmark results. According to the company, HyperNova 60B 2602 delivers a 5x improvement on Tau2-Bench and a 2x improvement on Terminal Bench Hard compared to its earlier compressed release. These benchmarks measure tool use and coding workflows rather than simple text replies, which makes the gains more relevant to agent-style workloads.

In other words, the model is aimed at practical applications such as coding, automation, and multi-step problem-solving, not just chat, and it targets them while running on significantly less hardware.

The Bigger Picture: Energy Efficiency and Sovereign AI

Multiverse’s approach ties into broader conversations about energy use and infrastructure limits in the AI industry. As models grow larger and more resource-intensive, the need for efficient solutions becomes increasingly urgent. CompactifAI offers a compelling alternative to simply building bigger models, aligning with European discussions around sovereign AI and sustainable tech.

The goal is not only to save money or reduce hardware costs, but also to make AI more accessible, sustainable, and scalable.

How Does It Work?

At its core, CompactifAI exploits redundancy in trained transformer weight matrices. Large models are often overparameterized, meaning the same behaviors can be represented with fewer effective parameters. Instead of generic “zip-style” compression, CompactifAI uses a model-aware factorization (quantum-inspired tensor networks) to rewrite large matrices into a smaller, structured form while limiting the loss in accuracy.

The process is applied post-training, meaning the original model doesn’t need to be retrained, and no access to the original training data is required. This makes it a versatile and practical solution for a wide range of use cases.
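
To make the idea of rewriting a large matrix as smaller factors concrete, here is a minimal sketch using truncated SVD, a much simpler low-rank factorization. It is a stand-in for illustration only, not CompactifAI’s tensor-network method, whose details the article does not give. The weight matrix is synthetic, and the function name `low_rank_factorize` and all sizes are assumptions. Like the process described above, it works from the weights alone, with no retraining and no training data.

```python
import numpy as np

def low_rank_factorize(W, rank):
    """Return factors (A, B) such that A @ B approximates W (truncated SVD)."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    A = U[:, :rank] * s[:rank]   # shape (m, rank), singular values folded in
    B = Vt[:rank, :]             # shape (rank, n)
    return A, B

rng = np.random.default_rng(0)
m, n, true_rank = 256, 512, 16

# Synthetic "redundant" weight matrix (assumption): low-rank signal plus small noise
W = rng.standard_normal((m, true_rank)) @ rng.standard_normal((true_rank, n))
W += 0.01 * rng.standard_normal((m, n))

A, B = low_rank_factorize(W, rank=16)

params_before = W.size
params_after = A.size + B.size
rel_error = np.linalg.norm(W - A @ B) / np.linalg.norm(W)

print(f"parameters: {params_before} -> {params_after}")
print(f"relative reconstruction error: {rel_error:.4f}")
```

Because the synthetic matrix is nearly rank 16 by construction, two thin factors reproduce it closely with far fewer stored numbers. Real model weights are not this cleanly redundant, which is where more structured factorizations and careful accuracy checks come in.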

Can It Be Applied to Every LLM?

CompactifAI works on transformer-based large language models, including dense foundation models, provided access to the model weights is available. It’s architecture-agnostic within the transformer family and doesn’t require changes to the model’s external behavior or APIs.

However, compression effectiveness depends on the level of redundancy in the model. Large, overparameterized models typically offer the greatest compression potential. The primary technical challenge is preserving model accuracy while achieving high compression ratios, which is addressed by carefully controlling tensor decomposition parameters.
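
The trade-off between compression ratio and accuracy can be illustrated with a simple stand-in: truncated SVD, where the rank plays the role of a decomposition parameter. This is a sketch under stated assumptions, not the CompactifAI procedure or its actual parameters. The matrix is synthetic with geometrically decaying singular values, and the helper name `sweep` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 128, 128

# Synthetic matrix with decaying singular values (assumption, not real model weights)
U0, _ = np.linalg.qr(rng.standard_normal((m, m)))
V0, _ = np.linalg.qr(rng.standard_normal((n, n)))
singular_values = 0.9 ** np.arange(min(m, n))
W = (U0 * singular_values) @ V0.T

def sweep(W, ranks):
    """For each rank, return (rank, compression ratio, relative error)."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    rows = []
    for r in ranks:
        approx = (U[:, :r] * s[:r]) @ Vt[:r, :]
        # Stored numbers: r * (rows + cols) for the two factors
        ratio = W.size / (r * (W.shape[0] + W.shape[1]))
        err = np.linalg.norm(W - approx) / np.linalg.norm(W)
        rows.append((r, ratio, err))
    return rows

results = sweep(W, [4, 8, 16, 32])
for r, ratio, err in results:
    print(f"rank={r:3d}  compression={ratio:5.1f}x  relative error={err:.3f}")
```

Lower ranks give higher compression but larger error, and the more quickly a matrix’s singular values decay (that is, the more redundant it is), the further the rank can be cut before accuracy suffers.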

What’s Next?

Looking ahead, Multiverse’s compression technique could be applied to upcoming LLMs, enabling devices like cars, phones, and laptops to run small or nano AI models preinstalled on their hardware. This could pave the way for a new era of edge computing, where powerful AI is no longer confined to the cloud.

There are hardware implications as well. CompactifAI is hardware-agnostic at the model level, meaning the resulting model can be deployed across cloud, on-prem, and edge environments without changing the model’s external interface. Inference speedups depend on the previous bottleneck: if a workload was memory-bound, a smaller model often runs significantly faster and cheaper on the same hardware.
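
A back-of-the-envelope estimate shows why model size matters for memory-bound inference. The sketch below assumes that every weight byte is read once per generated token and that nothing else limits speed, which is a deliberate simplification. The bandwidth figure is a hypothetical value chosen for illustration, and the function name `max_tokens_per_second` is an assumption; only the 61GB and 32GB sizes come from the article.

```python
def max_tokens_per_second(model_gb, bandwidth_gb_per_s):
    """Rough upper bound on decode speed when weight reads dominate.

    Assumes all weights are read once per generated token.
    """
    return bandwidth_gb_per_s / model_gb

bandwidth = 1000.0  # GB/s, hypothetical accelerator memory bandwidth (assumption)

original = max_tokens_per_second(61, bandwidth)
compressed = max_tokens_per_second(32, bandwidth)
speedup = compressed / original

print(f"original:   {original:.1f} tokens/s (upper bound)")
print(f"compressed: {compressed:.1f} tokens/s (upper bound)")
print(f"speedup:    {speedup:.2f}x")
```

Under these assumptions the bound scales inversely with model size, so the speedup equals the size ratio whatever bandwidth is chosen. Compute-bound workloads, batching, and sparse architectures that read only part of the weights per token will not follow this simple ratio.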

Why This Matters

HyperNova 60B 2602 is more than a technical achievement. If the reported results hold up, it suggests that efficiency and performance don’t have to be mutually exclusive. By compressing existing models rather than simply building larger ones, Multiverse is testing how far that idea can go.

This is the kind of innovation that could democratize access to AI, making it more affordable, sustainable, and scalable for everyone. And in a world where AI is becoming increasingly central to our lives, that’s a big deal.

