Linux 7.1 To Prevent Intel NPUs From Being Exhausted By Single Programs

Intel’s IVPU Driver Will Cap Non-Root AI Accelerator Usage in Linux 7.1 to Prevent Resource Monopolization

In a move aimed at safeguarding system stability and ensuring fair access to AI acceleration hardware, Intel is introducing default resource limits for non-root users in its Neural Processing Unit (NPU) driver for the upcoming Linux 7.1 kernel. The change, part of an update to the IVPU (Intel Versatile Processing Unit) driver, is designed to prevent any single user-space application from monopolizing the NPU’s resources. Left unchecked, that kind of monopolization could amount to a denial of service for other applications or users trying to use Intel’s AI acceleration hardware.

The IVPU driver, which manages Intel’s NPU hardware under Linux, will enforce a default quota starting with Linux 7.1. Non-root user-space programs will be limited to roughly half of the NPU’s resources: 64 of the 128 available contexts and 127 of the 255 available doorbells. Applications running with root privileges, by contrast, keep access to the entire pool of 128 contexts and 255 doorbells.
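The article does not say how the driver counts usage, so the following is only a minimal Python sketch of how a cap of this shape behaves, assuming per-user (UID) accounting on top of a shared hardware pool. The names `NpuQuota`, `acquire`, `release` and `cap_for` are hypothetical and are not taken from the IVPU driver, which is written in C. Halving with integer division reproduces both quoted figures: 128 // 2 = 64 and 255 // 2 = 127.

```python
from collections import defaultdict

# Hardware totals quoted in the article.
TOTAL = {"context": 128, "doorbell": 255}


def cap_for(kind: str, uid: int) -> int:
    """Per-user cap: the full pool for root (uid 0), half rounded down otherwise."""
    total = TOTAL[kind]
    return total if uid == 0 else total // 2


class NpuQuota:
    """Hypothetical quota tracker; not code from the IVPU driver."""

    def __init__(self) -> None:
        # usage[uid][kind]: units currently held by that user
        self.usage = defaultdict(lambda: {"context": 0, "doorbell": 0})
        # in_use[kind]: units held across all users (the shared hardware pool)
        self.in_use = {"context": 0, "doorbell": 0}

    def acquire(self, uid: int, kind: str) -> bool:
        """Grant one unit if both the hardware pool and the user's cap allow it."""
        if self.in_use[kind] >= TOTAL[kind]:
            return False  # hardware pool exhausted
        held = self.usage[uid]
        if held[kind] >= cap_for(kind, uid):
            return False  # this user's cap reached
        held[kind] += 1
        self.in_use[kind] += 1
        return True

    def release(self, uid: int, kind: str) -> None:
        """Return one unit previously granted to uid (counts unchanged if none is held)."""
        held = self.usage[uid]
        if held[kind] > 0:
            held[kind] -= 1
            self.in_use[kind] -= 1


if __name__ == "__main__":
    q = NpuQuota()
    # A non-root user (uid 1000) asks for 100 contexts but only gets 64.
    print(sum(q.acquire(1000, "context") for _ in range(100)))  # 64
    # Root can still open contexts from the remaining pool.
    print(q.acquire(0, "context"))  # True
    # The same user's doorbell requests stop at 127.
    print(sum(q.acquire(1000, "doorbell") for _ in range(300)))  # 127
```

The sketch checks the shared pool before the per-user cap, so requests fail cleanly instead of over-committing the hardware. Under this per-UID assumption, two different non-root users could still fill the pool between them; whether the real driver counts per user, per process or per open file is not stated in the article.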

The safeguard is meant to keep any one application or user from hogging the NPU and starving other processes or users of AI acceleration. Intel’s NPU hardware is still relatively niche in the Linux ecosystem, so the change appears aimed at a future in which AI acceleration is more mainstream and multi-user setups are more common.

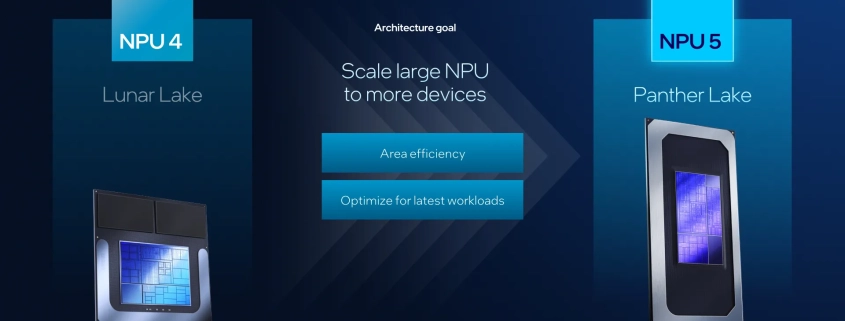

At present, the most notable software using Intel NPUs under Linux is Intel’s own OpenVINO toolkit, an open-source framework for optimizing and deploying deep learning inference. Beyond that, NPU hardware and software support under Linux remains limited. Still, as AI-driven workloads grow and NPUs are increasingly integrated into modern processors from both Intel and AMD (via its Ryzen AI series), the need for robust resource management is becoming more apparent.

The IVPU driver change was submitted as part of the drm-misc-next pull request, which collects updates for the Direct Rendering Manager (DRM) subsystem and the compute accelerator drivers maintained alongside it. These updates are now queued for the Linux 7.1 merge window, slated to open next month.

Intel’s approach reflects a growing awareness in the industry that performance has to be balanced with fairness as specialized hardware like NPUs becomes more prevalent. By capping non-root usage, Intel aims to protect system stability while ensuring that multiple users and applications can share the NPU.

Some may view the restrictions as overly cautious given how few applications currently use NPUs, but the move anticipates the rapid evolution of AI workloads on consumer and enterprise hardware alike. As AI acceleration becomes more widespread, similar safeguards may well become standard practice across the industry.
