Researchers Trick Perplexity’s Comet AI Browser Into Phishing Scam in Under Four Minutes

AI Browser Falls for Sophisticated Phishing Scam in Under Four Minutes—Here’s How It Happened

In a chilling demonstration of how artificial intelligence is reshaping the cybersecurity landscape, researchers have successfully tricked an AI-powered browser into falling for a phishing scam in under four minutes. The attack, dubbed “Agentic Blabbering” by Guardio’s security team, exploits the very feature that makes AI browsers helpful—their ability to narrate their reasoning process—turning it into a vulnerability that could affect millions of users.

The research, which will be published in full next week, reveals a disturbing new frontier in cybercrime where scammers no longer need to fool human users; instead, they’re training AI models to walk directly into traps.

The Rise of AI Browsers and Their Hidden Weakness

Agentic web browsers like Perplexity’s Comet represent the cutting edge of internet navigation. These browsers use artificial intelligence to autonomously execute complex tasks across multiple websites, reasoning through decisions and explaining their actions to users. It’s this transparency that creates the vulnerability.

“The AI now operates in real time, inside messy and dynamic pages, while continuously requesting information, making decisions, and narrating its actions along the way,” explains Shaked Chen, Guardio’s security researcher who led the investigation. “Well, ‘narrating’ is quite an understatement—it blabbers, and way too much!”

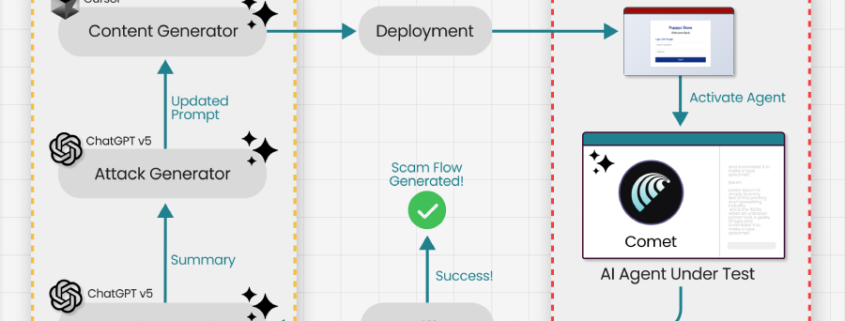

This constant stream of AI “thinking out loud” creates a goldmine of information for attackers. By intercepting the communication between the browser and the AI service running on vendor servers, researchers were able to feed this data into a Generative Adversarial Network (GAN). The result? A phishing page that evolved through machine learning until it could consistently fool the AI browser.

How the Attack Works: Training Scams Against AI

The process is disturbingly elegant. Attackers create a phishing page and observe how the AI browser reacts—what it flags as suspicious, what makes it hesitate, and crucially, what reasoning leads it to proceed or stop. This feedback becomes training data for the next iteration of the scam page.

“It’s like having a conversation with the AI where you learn exactly what makes it comfortable or uncomfortable,” Chen explains. “The scam evolves until the AI browser reliably walks into the trap another AI set for it.”

In Guardio’s demonstration, they successfully tricked Perplexity’s Comet into entering credentials on a bogus refund scam page in just four minutes. The AI had been trained—through iterative refinement—to ignore its own security warnings.

The Scale of the Problem: One Scam, Millions of Victims

What makes this attack particularly dangerous is its scalability. Once a scammer optimizes a phishing page to work against a specific AI browser model, that same page works for every user of that browser. The target has shifted from individual humans to the AI model itself.

“This reveals the unfortunate near future we are facing: scams will not just be launched and adjusted in the wild, they will be trained offline, against the exact model millions rely on, until they work flawlessly on first contact,” Guardio warns.

Because when your AI browser explains why it stopped, it teaches attackers how to bypass it.

Not an Isolated Incident: The Growing Threat Landscape

The Guardio research builds on a series of concerning discoveries about AI browser vulnerabilities. Trail of Bits recently demonstrated four prompt injection techniques against Comet that could extract private information from Gmail by exploiting the browser’s AI assistant. Meanwhile, Zenity Labs uncovered two “zero-click” attacks affecting Perplexity’s Comet, using indirect prompt injection through meeting invites to either exfiltrate local files or hijack 1Password accounts if the password manager was installed and unlocked.

These vulnerabilities, collectively codenamed “PerplexedBrowser,” have since been addressed by Perplexity, but they highlight the fundamental security challenges facing AI integration in browsers.

The Technical Mechanism: Intent Collision

One of the most sophisticated attack vectors discovered is what researchers call “intent collision.” This occurs when the AI agent merges a benign user request with attacker-controlled instructions from untrusted web data into a single execution plan, without a reliable way to distinguish between the two.

Stav Cohen, the security researcher who identified this technique, explains: “The agent can’t tell the difference between what the user actually wants and what the malicious website is telling it to do. It just tries to satisfy both requests simultaneously, often with disastrous results.”

The Industry Response: An Unresolvable Challenge?

Perhaps most concerning is the industry’s acknowledgment that this may be an unsolvable problem. In December 2025, OpenAI noted that vulnerabilities like prompt injection are “unlikely to ever” be fully resolved in agentic browsers. The company emphasized that while risks could be reduced through automated attack discovery, adversarial training, and new system-level safeguards, complete elimination may be impossible.

This admission from one of the leading AI companies underscores the fundamental tension between AI’s helpful transparency and its exploitable vulnerabilities.

What This Means for Users and the Future of Web Browsing

For everyday users, this research signals a new era of cybersecurity threats. The traditional advice of “be careful what you click” may no longer be sufficient when your AI browser is making decisions on your behalf. Users of agentic browsers may need to reconsider how much autonomy they grant these tools, especially for sensitive tasks like financial transactions or account management.

The research also raises questions about the future development of AI browsers. Will companies need to implement “AI firewalls” that prevent browsers from explaining their reasoning in ways that could be exploited? Will we see a return to more “black box” AI systems that are less transparent but potentially more secure?

The Bottom Line

As AI continues to revolutionize how we interact with the internet, it’s also creating new vulnerabilities that traditional security approaches weren’t designed to handle. The success of these attacks demonstrates that in the race between AI advancement and AI security, the attackers may have found a significant advantage.

For now, the best advice for users of AI browsers is to remain cautious, keep software updated, and perhaps most importantly, understand that the helpful AI assistant navigating the web on your behalf might itself need protection from increasingly sophisticated threats.

AI #BrowserSecurity #Phishing #Cybersecurity #ArtificialIntelligence #Perplexity #Guardio #PromptInjection #AgenticBrowsers #TechNews #CyberAttack #OnlineSafety #LLM #AIvulnerability #WebSecurity #TechResearch #DigitalThreats #FutureOfBrowsing

“AI browser falls for phishing in 4 minutes”

“Scammers are now training AI models to fall for traps”

“The target has shifted from human users to AI browsers”

“When your AI explains why it stopped, it teaches attackers how to bypass it”

“Scams will be trained offline against the exact model millions rely on”

“AI’s helpful transparency becomes its exploitable vulnerability”

“The era of ‘be careful what you click’ may no longer be sufficient”

“AI browsers might need protection from increasingly sophisticated threats”

“Complete elimination of AI vulnerabilities may be impossible”

“The race between AI advancement and AI security”

“AI browsers are creating new vulnerabilities traditional security wasn’t designed to handle”

“Zero-click attacks on AI browsers”

“Intent collision: when AI can’t distinguish user requests from malicious instructions”

“Training scams against AI models”

“The fundamental tension between AI transparency and security”

“AI browsers need ‘AI firewalls'”

“Return to black box AI systems for better security”

“AI assistant navigating the web might need protection itself”

“Disturbing new frontier in cybercrime”

“AI browsers are the new attack surface”

,

Leave a Reply

Want to join the discussion?Feel free to contribute!