Open-Source “GreenBoost” Driver Aims To Augment NVIDIA GPUs’ VRAM With System RAM & NVMe To Handle Larger LLMs

GreenBoost: NVIDIA’s CUDA Gets a Massive Memory Boost with Open-Source Innovation

An independent open-source developer has released GreenBoost, an experimental Linux kernel module that extends the memory available to NVIDIA GPUs with system RAM and NVMe storage. The aim is to run large AI models on consumer-grade hardware when they would not otherwise fit in VRAM, without the need for high-end, expensive GPUs.

The Problem: Memory Constraints in AI Workloads

AI developers and researchers have long been constrained by the limited video memory (VRAM) on NVIDIA GPUs. Large language models (LLMs) and other complex AI workloads often need more memory than even the most powerful consumer GPUs provide. The usual workarounds come with significant trade-offs: offloading layers to system memory reduces performance, and quantization can lower model quality. GreenBoost is an attempt to address these limits directly.

What is GreenBoost?

Developed by Ferran Duarri, an independent open-source developer, GreenBoost is a multi-tier GPU memory extension for Linux. It does not replace NVIDIA’s official drivers; it is a separate kernel module that works alongside them to give CUDA applications access to more memory. The project is licensed under the GPLv2, which keeps it open source and open to community contributions.

How Does GreenBoost Work?

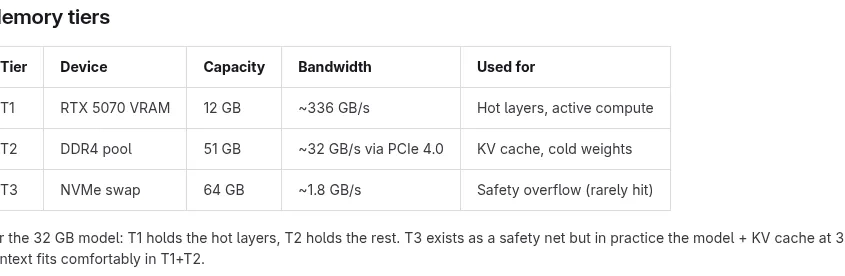

GreenBoost operates through a two-component system:

- Kernel Module (greenboost.ko): This module allocates pinned DDR4 pages using the buddy allocator, creating 2 MB compound pages for efficiency. These pages are exported as DMA-BUF file descriptors, which the GPU can import as CUDA external memory via cudaImportExternalMemory. From CUDA’s perspective, these pages appear as device-accessible memory, even though they reside in system RAM. The PCIe 4.0 x16 link handles data movement at speeds of up to 32 GB/s. A sysfs interface (/sys/class/greenboost/greenboost/pool_info) allows users to monitor memory usage in real-time, while a watchdog kernel thread monitors RAM and NVMe pressure to prevent system instability.

- CUDA Shim (libgreenboost_cuda.so): This user-space library is injected via LD_PRELOAD and intercepts CUDA memory allocation functions such as cudaMalloc, cudaMallocAsync, cuMemAllocAsync, cudaFree, and cuMemFree. Small allocations (under 2 MB) are directed to VRAM, while larger ones are routed to the expanded memory pool. This transparent approach ensures compatibility with existing CUDA applications without requiring any modifications.
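
The shim’s size-based routing can be illustrated with a small model. The sketch below is not GreenBoost’s code: it only mirrors the policy as described above, taking the 2 MB threshold and the 2 MB page size from the text and assuming that “MB” means MiB. The names route_allocation and pages_needed are hypothetical and exist only for this illustration.

```python
# Illustrative model of the routing policy described above; not GreenBoost source.
MIB = 1024 * 1024
THRESHOLD_BYTES = 2 * MIB  # assumption: "2 MB" in the text means 2 MiB
PAGE_BYTES = 2 * MIB       # size of one compound page, per the description


def route_allocation(size_bytes: int) -> str:
    """Return "vram" for requests under the threshold, "pool" otherwise."""
    if size_bytes < 0:
        raise ValueError("allocation size must be non-negative")
    return "vram" if size_bytes < THRESHOLD_BYTES else "pool"


def pages_needed(size_bytes: int) -> int:
    """Number of 2 MiB pages needed to back a request, rounded up."""
    return -(-size_bytes // PAGE_BYTES)  # ceiling division on integers


if __name__ == "__main__":
    # Print the routing decision and page count for a few sample sizes.
    for size in (64 * 1024, 2 * MIB, 5 * MIB):
        print(size, route_allocation(size), pages_needed(size))
```

The actual shim would make this decision in native code inside the intercepted CUDA calls; the Python version only shows the policy, including the boundary case where a request of exactly 2 MiB goes to the expanded pool.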

Real-World Impact

The inspiration for GreenBoost came from a practical challenge: running a 31.8 GB model (glm-4.7-flash:q8_0) on a GeForce RTX 5070 with just 12 GB of VRAM. Offloading layers to the CPU resulted in poor token throughput because system memory lacks CUDA coherence. Quantization reduces memory requirements but often lowers model quality. GreenBoost instead integrates system RAM and NVMe storage into the memory pool the GPU can address, with the goal of running models larger than VRAM without those compromises.
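
A rough calculation from the figures above shows how much of that model has to live outside VRAM. This is back-of-the-envelope arithmetic, not a benchmark: it assumes decimal gigabytes, that all 12 GB of VRAM is available for model data, and that the PCIe link sustains its quoted 32 GB/s peak. The function names are hypothetical.

```python
GB = 1e9  # assumption: decimal gigabytes


def spill_bytes(model_bytes: float, vram_bytes: float) -> float:
    """Bytes that do not fit in VRAM and must be placed elsewhere."""
    return max(0.0, model_bytes - vram_bytes)


def transfer_seconds(num_bytes: float, bytes_per_second: float) -> float:
    """Best-case time to move num_bytes once over a link."""
    return num_bytes / bytes_per_second


if __name__ == "__main__":
    # Figures from the article: 31.8 GB model, 12 GB VRAM, 32 GB/s link.
    spill = spill_bytes(31.8 * GB, 12 * GB)
    print(f"outside VRAM: {spill / GB:.1f} GB")
    print(f"one full transfer at 32 GB/s: {transfer_seconds(spill, 32 * GB):.2f} s")
```

Under these assumptions roughly 19.8 GB sits outside VRAM, and moving that much data once across the link takes at least about 0.62 seconds. Real throughput depends on how much of the spilled data each step actually touches, so this is a bound on a single transfer rather than a performance prediction.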

Why GreenBoost Matters

GreenBoost is more than a technical exercise; it shows what an independent open-source project can build on top of standard Linux and CUDA interfaces. If it works as intended, it lowers the hardware bar for large AI workloads. Whether you’re a researcher, developer, or hobbyist, that means being able to run bigger models on the GPU you already own.

Get Involved

The GreenBoost project is still experimental, but it is already attracting attention in the tech community. If you want to try it, the source code is available on GitLab. Contributions, feedback, and collaboration are welcome.

Conclusion

GreenBoost offers a practical approach to one of the most pressing constraints in AI development: GPU memory capacity. By extending NVIDIA GPU memory with system RAM and NVMe storage, it opens up new possibilities for running large AI models on consumer hardware. The project is still evolving, and if it matures it could make advanced workloads accessible to far more users.
