A Visual Introduction to Machine Learning

Decision Trees: How Machines Learn to Make Smart Choices

The Quest for Perfect Boundaries

In machine learning, a boundary that cleanly separates two categories is rarely obvious at first glance. When we first proposed a 240-foot elevation boundary to distinguish San Francisco homes from New York homes, it looked like a strong rule: anything above that elevation was labeled San Francisco. But that initial intuition was only the starting point for a deeper analysis.

Seeing the Data Differently

Sometimes, the key to solving a complex problem lies in changing your perspective. By transforming our elevation data into a histogram, we suddenly saw patterns that were invisible before. While New York’s highest home sits at approximately 240 feet, the majority of homes across both cities cluster at much lower elevations. This visualization technique is crucial because it reveals the true distribution of our data, showing us where the real boundaries might lie.
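To make the histogram idea concrete, here is a minimal sketch that bins elevations into 50-foot buckets and prints a text histogram per city. The elevation values and the names `sf_elevations`, `ny_elevations`, and `histogram` are invented for illustration; they are not the article's data.

```python
from collections import Counter

# Hypothetical elevations in feet, invented for illustration only.
sf_elevations = [12, 35, 48, 90, 130, 180, 250, 310]
ny_elevations = [5, 8, 15, 22, 30, 33, 60, 238]


def histogram(values, bin_width=50):
    """Count how many values fall into each bin of width `bin_width`."""
    counts = Counter((v // bin_width) * bin_width for v in values)
    return dict(sorted(counts.items()))


def print_histogram(label, values, bin_width=50):
    """Print one row of '#' marks per non-empty bin."""
    print(label)
    for start, count in histogram(values, bin_width).items():
        print(f"  {start:>3}-{start + bin_width - 1:<3} ft | {'#' * count}")


print_histogram("San Francisco", sf_elevations)
print_histogram("New York", ny_elevations)
```

With this toy data, most New York homes land in the lowest bin, while San Francisco homes spread across many bins, which is the kind of pattern a histogram makes visible.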

The Power of Decision Trees

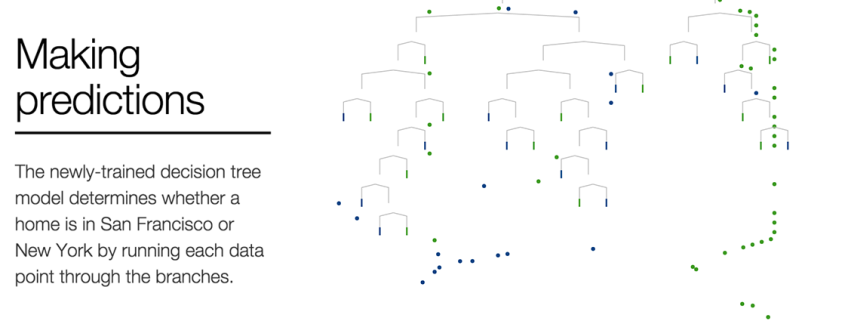

At the heart of this classification challenge lies the decision tree, a fundamental machine learning algorithm that mimics human decision-making through a series of if-then statements. Think of it as a flowchart where each decision point asks a simple question: “Is this home’s elevation above a certain threshold?” If yes, it goes one direction; if no, it goes another. These questions, called forks here (and often called splits or decision nodes elsewhere), are the building blocks of how a decision tree learns to categorize complex data.
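A single fork can be written as one if-then statement. The sketch below uses the 240-foot threshold mentioned earlier; the function name `classify_by_elevation` is hypothetical, and the rule is intentionally naive.

```python
def classify_by_elevation(elevation_ft, threshold_ft=240):
    """One fork: a single if-then question about elevation."""
    if elevation_ft > threshold_ft:
        return "San Francisco"
    # Everything at or below the threshold falls into the other branch.
    return "New York"


print(classify_by_elevation(300))
print(classify_by_elevation(100))
```

Note that a San Francisco home at 100 feet would be labeled New York by this rule, which is exactly the kind of error a single fork cannot avoid.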

Understanding Forks and Split Points

Each fork in a decision tree creates a split point—a specific value that divides the data into two branches. Everything to the left of this point gets categorized one way, while everything to the right gets categorized differently. This split point is essentially the algorithm’s version of drawing a line in the sand, creating a boundary between different classifications.

The Tradeoff Dilemma

Here’s where things get interesting: choosing a split point always involves tradeoffs. Our initial 240-foot split, while intuitive, created a significant problem. Many San Francisco homes at lower elevations got incorrectly classified as New York homes. If we treat San Francisco as the category we are trying to detect, these misclassifications are what data scientists call false negatives: cases where the algorithm missed something it should have caught.

But the problem works both ways. If we adjust our split point to capture every single San Francisco home, we end up including many New York homes as well. These are called false positives—cases where the algorithm incorrectly identifies something as belonging to a category when it doesn’t.
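The tradeoff can be seen by counting both kinds of error at several thresholds. This is a sketch under stated assumptions: the `homes` list is invented, San Francisco is treated as the positive class, and `count_errors` is a hypothetical helper.

```python
# Hypothetical (elevation_ft, city) pairs, invented for illustration only.
homes = [
    (12, "SF"), (35, "SF"), (48, "SF"), (90, "SF"),
    (130, "SF"), (180, "SF"), (250, "SF"), (310, "SF"),
    (5, "NY"), (8, "NY"), (15, "NY"), (22, "NY"),
    (30, "NY"), (33, "NY"), (60, "NY"), (238, "NY"),
]


def count_errors(homes, threshold_ft):
    """Predict SF when elevation > threshold; SF is the positive class.

    Returns (false_positives, false_negatives).
    """
    # SF homes at or below the threshold are missed: false negatives.
    false_negatives = sum(
        1 for elev, city in homes if city == "SF" and elev <= threshold_ft
    )
    # NY homes above the threshold are wrongly labeled SF: false positives.
    false_positives = sum(
        1 for elev, city in homes if city == "NY" and elev > threshold_ft
    )
    return false_positives, false_negatives


for threshold in (240, 100, 10):
    fp, fn = count_errors(homes, threshold)
    print(f"threshold={threshold:>3} ft  false positives={fp}  false negatives={fn}")
```

On this toy data, lowering the threshold removes false negatives but adds false positives, so no single threshold eliminates both.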

Finding the Best Split

The goal of decision tree learning is to find the best split: the point where each resulting branch is as homogeneous as possible. This concept of “purity” means that the homes in a branch belong, as far as possible, to a single category, so one branch is mostly San Francisco homes and the other mostly New York homes.

Several mathematical measures exist for scoring a candidate split, such as Gini impurity and cross-entropy, each with its own strengths and weaknesses. The algorithm essentially tries different split points and evaluates which one creates the cleanest separation between categories. However, even the best split on a single feature rarely provides perfect separation. In our case, elevation alone couldn’t perfectly distinguish between San Francisco and New York homes.
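Here is one way the search for a best split might look. The article does not say which purity measure it uses, so this sketch assumes Gini impurity, one common choice. The data and the names `gini` and `best_split` are illustrative only.

```python
def gini(labels):
    """Gini impurity: 0.0 when all labels match, larger when mixed."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(values, labels):
    """Try the midpoint between each pair of adjacent distinct values.

    Returns (threshold, weighted_impurity) for the lowest-impurity split,
    or (None, impurity_of_all_labels) if no split improves on no split.
    """
    distinct = sorted(set(values))
    best_threshold, best_score = None, gini(labels)
    for lo, hi in zip(distinct, distinct[1:]):
        threshold = (lo + hi) / 2
        left = [lab for v, lab in zip(values, labels) if v <= threshold]
        right = [lab for v, lab in zip(values, labels) if v > threshold]
        # Weight each branch's impurity by its share of the data.
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score


# Hypothetical elevations (feet) and cities, invented for illustration only.
elevations = [10, 20, 30, 40, 50, 60, 70]
cities = ["NY", "NY", "NY", "SF", "NY", "SF", "SF"]

threshold, score = best_split(elevations, cities)
print(f"best threshold: {threshold} ft, weighted impurity: {score:.4f}")
```

Even the best threshold here leaves some impurity, because one New York home sits among the San Francisco elevations. That mirrors the point above: a single feature rarely separates the categories perfectly.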

The Magic of Recursion

When a single split isn’t enough, decision trees employ a powerful technique called recursion. This means the algorithm repeats the splitting process on each subset of data, creating a tree-like structure of decisions. It’s like solving a puzzle by breaking it down into smaller and smaller pieces until each piece is simple enough to understand.

The histograms on the left side of our visualization show this recursive process in action, displaying how the data distribution changes within each subset. What’s fascinating is that the best split point varies depending on which branch of the tree you’re examining.
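The recursive process can be sketched as a function that finds the best split for a subset, then calls itself on each resulting branch. This is a simplified illustration rather than a production algorithm: it assumes Gini impurity as the purity measure, and the data, feature names, and the `build_tree` helper are hypothetical.

```python
def gini(labels):
    """Gini impurity of a list of labels (0.0 means pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(rows, labels):
    """Search every feature and midpoint threshold.

    Returns (feature, threshold), or None if no split lowers impurity.
    """
    best, best_score = None, gini(labels)
    for feature in rows[0]:
        distinct = sorted({row[feature] for row in rows})
        for lo, hi in zip(distinct, distinct[1:]):
            threshold = (lo + hi) / 2
            left = [lab for r, lab in zip(rows, labels) if r[feature] <= threshold]
            right = [lab for r, lab in zip(rows, labels) if r[feature] > threshold]
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:
                best, best_score = (feature, threshold), score
    return best


def build_tree(rows, labels, depth=0, max_depth=3):
    """Recursively split until a branch is pure, unsplittable, or too deep."""
    split = best_split(rows, labels) if depth < max_depth else None
    if split is None:
        # Leaf: predict the most common label in this branch.
        return max(sorted(set(labels)), key=labels.count)
    feature, threshold = split
    left = [i for i, r in enumerate(rows) if r[feature] <= threshold]
    right = [i for i, r in enumerate(rows) if r[feature] > threshold]
    return {
        "feature": feature,
        "threshold": threshold,
        # The same procedure is repeated on each subset: this is the recursion.
        "left": build_tree([rows[i] for i in left], [labels[i] for i in left],
                           depth + 1, max_depth),
        "right": build_tree([rows[i] for i in right], [labels[i] for i in right],
                            depth + 1, max_depth),
    }


# Hypothetical homes: (elevation_ft, price_per_sqft, city), invented data.
data = [
    (300, 1400, "SF"), (250, 1600, "SF"), (40, 500, "SF"), (22, 600, "SF"),
    (20, 1200, "NY"), (30, 1500, "NY"), (15, 1300, "NY"), (25, 1700, "NY"),
]
rows = [{"elevation_ft": e, "price_per_sqft": p} for e, p, _ in data]
labels = [city for _, _, city in data]

tree = build_tree(rows, labels)
print(tree)
```

On this toy data the root splits on elevation, and the lower-elevation branch then splits on price per square foot. Each branch reruns the search on its own subset, so it is free to choose a different feature and threshold.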

Branch-Specific Decision Making

For homes at lower elevations, the algorithm might determine that price per square foot is the most important factor for the next decision. Perhaps it settles on a specific value—let’s say $X per square foot—as the threshold for the next if-then statement. Meanwhile, for higher elevation homes, the algorithm might decide that the total price is more relevant, choosing a different threshold—perhaps $Y total price—for that branch.

This adaptive approach is what makes decision trees so powerful. Rather than applying the same rules everywhere, the algorithm learns which factors matter most in different contexts, creating a nuanced and sophisticated classification system.

The Final Structure

As the algorithm continues to split and refine, it builds a complete decision tree that can classify new homes based on their features. Each path from the root to a leaf represents a series of decisions that lead to a final classification. The beauty of this approach is that it creates a model that’s not only accurate but also interpretable—you can actually follow the logic of how the algorithm arrived at its conclusion.
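Interpretability can be illustrated by recording the decisions taken along one root-to-leaf path. The tree below is written by hand with invented thresholds; it is not the tree from the article, and `classify` is a hypothetical helper.

```python
# Hypothetical tree; the thresholds are invented, not taken from the article.
tree = {
    "feature": "elevation_ft",
    "threshold": 35.0,
    "left": {
        "feature": "price_per_sqft",
        "threshold": 900.0,
        "left": "San Francisco",
        "right": "New York",
    },
    "right": "San Francisco",
}


def classify(tree, home):
    """Follow one root-to-leaf path; return (label, decisions taken)."""
    path = []
    node = tree
    # Internal nodes are dicts; leaves are plain label strings.
    while isinstance(node, dict):
        feature, threshold = node["feature"], node["threshold"]
        if home[feature] <= threshold:
            path.append(f"{feature} <= {threshold}")
            node = node["left"]
        else:
            path.append(f"{feature} > {threshold}")
            node = node["right"]
    return node, path


label, path = classify(tree, {"elevation_ft": 20, "price_per_sqft": 1250})
print(label, "because", " and ".join(path))
```

The returned path is a readable list of the if-then statements that led to the classification, which is what makes the model's reasoning easy to follow.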

This journey from a simple elevation boundary to a complete decision tree illustrates a fundamental principle of machine learning: a hard classification problem can often be broken down into a sequence of simple decisions, each of which is easy to check. It’s a process that mirrors human reasoning while scaling to datasets far larger than a person could sort by hand.

