A roadmap for AI, if anyone will listen

The AI Safety Showdown: How a Bipartisan Coalition Is Taking on Washington’s AI Wild West

In a turn of events that is drawing attention in Silicon Valley and on Capitol Hill alike, a bipartisan coalition of tech experts, former government officials, and public figures has taken matters into its own hands. The group has crafted what Washington has so far failed to produce: a comprehensive framework for responsible AI development, which it calls the “Pro-Human Declaration.”

The timing couldn’t be more critical. Just weeks after the Pentagon’s dramatic standoff with Anthropic over AI control rights exposed the complete absence of coherent AI governance, this declaration arrives like a lifeboat in a regulatory storm. It’s not just another policy paper gathering dust in a congressional archive—it’s a battle plan for the future of human agency in an AI-dominated world.

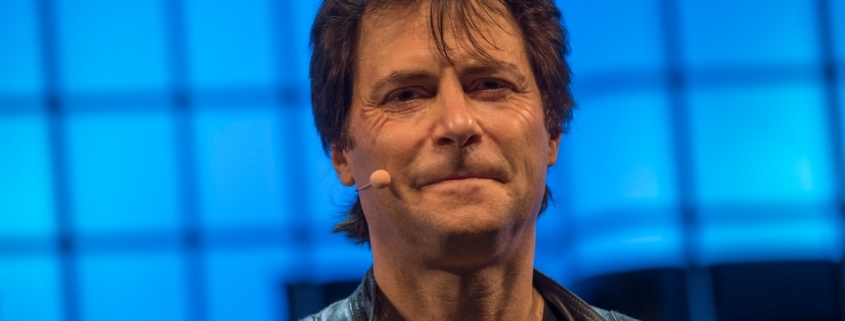

“We’re at a fork in the road,” explains Max Tegmark, the MIT physicist who helped spearhead this initiative. “One path leads to humans being gradually replaced—first as workers, then as decision-makers—while power concentrates in the hands of unaccountable institutions and their machines. The other path? That’s where AI becomes humanity’s greatest amplifier rather than our replacement.”

The declaration’s five pillars read like a manifesto for human survival in the digital age: keeping humans in charge, preventing power concentration, protecting the human experience, preserving individual liberty, and establishing real accountability for AI companies. But it’s the muscle behind these principles that’s truly revolutionary.

The document calls for an outright ban on superintelligence development until there’s scientific consensus on safety and genuine democratic buy-in. It demands mandatory kill switches on powerful systems and prohibits architectures capable of self-replication, autonomous self-improvement, or resistance to shutdown. In essence, it’s saying: if your AI can’t be turned off, it shouldn’t be turned on.

The urgency becomes painfully clear when you examine recent events. When Defense Secretary Pete Hegseth labeled Anthropic a “supply chain risk” after the company refused unlimited Pentagon access to its technology, it revealed something alarming: we’re making national security decisions about AI without any framework for what responsible development looks like. Hours later, OpenAI cut its own deal with the Defense Department—a contract that legal experts say will be nearly impossible to enforce meaningfully.

As Dean Ball, a senior fellow at the Foundation for American Innovation, told The New York Times, “This isn’t just some dispute over a contract. This is the first conversation we’ve had as a country about control over AI systems.”

Tegmark draws a compelling parallel that cuts through the technical jargon: “You never have to worry that some drug company is going to release a harmful drug before it’s been proven safe, because the FDA won’t allow it. Why should AI be any different?”

The coalition’s strategy for breaking through Washington’s gridlock is both clever and controversial: focus on child safety. The declaration calls for mandatory pre-deployment testing of AI products aimed at younger users, covering risks from increased suicidal ideation to emotional manipulation. It’s a calculated move that transforms an abstract technological debate into something visceral and immediate.

“If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that,” Tegmark argues. “We already have laws. It’s illegal. So why is it different if a machine does it?”

The beauty of this approach is its potential to create a domino effect. Once pre-release testing is established for children’s products, the scope naturally widens. “People will come along and be like—let’s add a few other requirements,” Tegmark predicts. “Maybe we should also test that this can’t help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the U.S. government.”

Perhaps most remarkably, this declaration has united ideological opposites. Former Trump advisor Steve Bannon and Susan Rice, President Obama’s National Security Advisor, have both signed on, along with former Joint Chiefs Chairman Mike Mullen and progressive faith leaders. It’s a coalition that transcends traditional political divides because it’s addressing something more fundamental than policy differences.

“What they agree on, of course, is that they’re all human,” Tegmark observes. “If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side.”

The Pro-Human Declaration represents more than just policy recommendations—it’s a referendum on the kind of future we want to build. In an era where AI development is proceeding at breakneck speed with minimal oversight, this coalition is essentially saying: slow down, establish some ground rules, and let’s make sure we’re building something that serves humanity rather than replacing it.

The question now is whether Washington will listen, or whether we’ll continue stumbling blindly into an AI future we haven’t collectively chosen. One thing’s certain: the conversation has finally begun in earnest, and it’s about time.


