AI chatbots with web browsing can be abused as malware relays

A newly demonstrated attack method reveals how cybercriminals could exploit AI chatbots with web browsing capabilities to secretly relay commands to malware-infected devices. Security researchers at Check Point Research have shown that rather than relying on traditional command-and-control (C2) servers, attackers could use AI chatbots as covert communication channels, blending malicious activity into legitimate web traffic.

The technique works by having malware instruct the chatbot to fetch a webpage and summarize its contents. The malware then extracts hidden instructions embedded in the returned text. This approach effectively turns the chatbot into a relay that fetches commands from a malicious URL and delivers them to the compromised machine.

How the Attack Works

The flow has three steps. Malware on an infected device prompts an AI chatbot with web browsing capabilities to load a specific URL. The chatbot fetches the page, processes the content, and returns a summary. The malware then parses that summary for the instructions the attacker planted on the page.

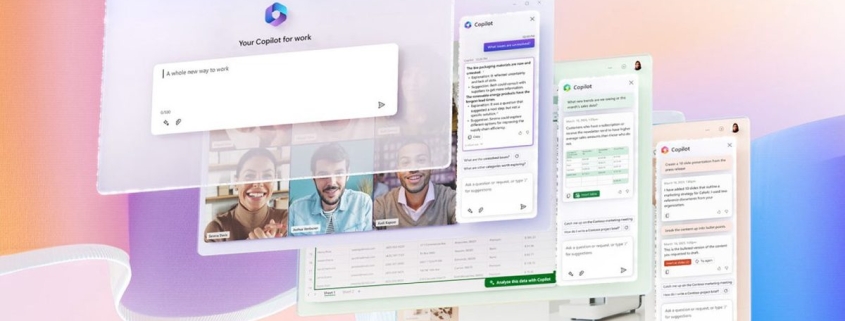

Check Point tested this method against popular AI services such as Grok and Microsoft Copilot via their web interfaces. A key advantage for attackers is that the technique requires no developer API access or API keys, which lowers the barrier to abuse.

For data exfiltration, the process can be reversed. Attackers could embed stolen data in URL query parameters, relying on the AI-triggered request to deliver the information to their infrastructure. Basic encoding techniques can further obscure the data, making it harder for simple content filters to detect.
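From the defender's side, one rough heuristic for spotting encoded data in query parameters is to flag values that are both long and high in character entropy. The sketch below is an illustration under stated assumptions, not a method from the Check Point research: the function names, the 40-character minimum, and the 4.0 bits-per-character threshold are all hypothetical and would need tuning against real traffic.

```python
import math
from collections import Counter
from urllib.parse import urlsplit, parse_qsl


def shannon_entropy(value: str) -> float:
    """Return the Shannon entropy of `value` in bits per character."""
    if not value:
        return 0.0
    counts = Counter(value)
    total = len(value)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def suspicious_query_params(url: str, min_len: int = 40, min_entropy: float = 4.0):
    """List query parameter names whose values look like encoded blobs.

    Both thresholds are assumptions for illustration only.
    """
    flagged = []
    query = urlsplit(url).query
    for name, value in parse_qsl(query, keep_blank_values=True):
        # Long, high-entropy values are typical of Base64 or similar encodings.
        if len(value) >= min_len and shannon_entropy(value) >= min_entropy:
            flagged.append(name)
    return flagged
```

A heuristic like this will miss short or deliberately low-entropy payloads and can misfire on legitimate tokens, so it is better treated as a triage signal than as a verdict.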

Why It’s Difficult to Detect

This isn’t a new type of malware but rather a creative use of existing tools. Since many organizations already permit traffic to major AI services, malicious activity can blend into normal web use.

Check Point highlights that the attack leverages common components such as WebView2, Microsoft’s Edge-based embedded browser control available on modern Windows systems. In the described workflow, a program gathers basic system information, opens a hidden web view to an AI service, triggers a URL request, and parses the response to extract the next command. Because many legitimate applications embed a web view in the same way, this behavior is hard to distinguish from normal application activity.
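One way a defender might act on this is to inventory which applications are actually talking to AI services and flag any that are not expected to. The following is a minimal sketch, assuming endpoint telemetry that can attribute each connection to an originating application. The record schema (`app`, `host`), the host list, and the allowlist are hypothetical placeholders, not a description of any particular product's data.

```python
# Illustrative, non-exhaustive list of AI service hosts to watch.
AI_HOSTS = {"copilot.microsoft.com", "grok.com"}

# Hypothetical allowlist of applications expected to reach AI services.
APPROVED_APPS = {"msedge.exe", "chrome.exe", "firefox.exe"}


def unexpected_ai_clients(connections):
    """Map each unapproved application to the AI hosts it contacted.

    `connections` is an iterable of dicts with 'app' and 'host' keys
    (an assumed schema for illustration).
    """
    hits = {}
    for conn in connections:
        app = conn["app"].lower()
        host = conn["host"].lower()
        if host in AI_HOSTS and app not in APPROVED_APPS:
            hits.setdefault(app, set()).add(host)
    return hits
```

An unfamiliar executable that hosts a web view pointed at a chatbot is not proof of compromise, but it is a reasonable lead to investigate.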

Microsoft’s Response

Microsoft acknowledged the research, framing it as a post-compromise communication issue. The company emphasized that once a device is compromised, attackers will attempt to use any available services, including AI-based ones. Microsoft urged organizations to adopt defense-in-depth strategies to prevent initial infections and limit the impact of post-compromise activities.

What Security Teams Should Do

Organizations should treat web-enabled chatbots like any other high-trust cloud application that could be abused after a breach. If these services are permitted, monitor for unusual patterns such as:

- Automated URL loads

- Odd prompt cadence

- Traffic volumes inconsistent with human use
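The cadence signal in the list above can be approximated from proxy or DNS logs by measuring how regular each client's requests to AI services are. The sketch below assumes log events shaped as `(client_id, host, unix_timestamp)` tuples and uses the coefficient of variation of the gaps between requests. The host list, the minimum request count, and the 0.1 cutoff are hypothetical values chosen for illustration.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Illustrative, non-exhaustive list of AI service hosts to watch.
AI_HOSTS = {"copilot.microsoft.com", "grok.com"}


def flag_regular_cadence(events, min_requests=6, max_cv=0.1):
    """Return clients whose AI-service requests arrive at near-fixed intervals.

    `events` is an iterable of (client_id, host, unix_timestamp) tuples,
    an assumed log shape. Thresholds are illustrative, not tuned values.
    """
    times = defaultdict(list)
    for client, host, ts in events:
        if host in AI_HOSTS:
            times[client].append(ts)

    flagged = []
    for client, stamps in times.items():
        if len(stamps) < min_requests:
            continue  # too few samples to judge regularity
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        avg = mean(gaps)
        # Coefficient of variation: low values mean machine-like timing.
        if avg > 0 and pstdev(gaps) / avg <= max_cv:
            flagged.append(client)
    return sorted(flagged)
```

Human use tends to be bursty, so its gaps vary widely, while a simple polling loop produces nearly identical gaps. Malware that adds random jitter would evade this check, which is why it works best alongside the volume and URL-load signals rather than on its own.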

AI browsing features should be restricted to managed devices and specific roles rather than deployed universally.

The open question is scale. While this is a proof-of-concept, it’s unclear how effective the technique would be against well-secured environments. Moving forward, the industry will need to watch for:

- Enhanced automation detection in web-based AI chat

- Greater scrutiny of AI destinations as potential post-compromise channels

As AI chatbots become more integrated into daily workflows, their dual-use potential as both productivity tools and covert attack vectors will require careful consideration from both providers and defenders.
