AI notification summaries may have racial and gender biases

Apple Intelligence Under Fire: New Research Exposes Alarming Bias in AI-Powered Notification Summaries

In a stunning revelation that’s sending shockwaves through the tech industry, German nonprofit AI Forensics has uncovered deeply troubling biases embedded within Apple’s much-hyped Apple Intelligence system. The nonprofit’s comprehensive analysis of over 10,000 notification summaries has exposed a pattern of racial and gender assumptions that could affect hundreds of millions of Apple device users worldwide.

The “Default White” Problem

Perhaps most concerning is what researchers are calling the “default white” phenomenon. Apple Intelligence is far less likely to mention a person’s ethnicity in a summary when that person is white, in effect treating whiteness as the unstated default. The numbers are stark: when working with otherwise identical messages, Apple’s AI mentioned the person’s ethnicity only 53% of the time when that person was white. For other ethnicities, the system was far more likely to specify: Asian individuals were identified by ethnicity 89% of the time, Hispanic individuals 86% of the time, and Black individuals 64% of the time.

“This isn’t just a technical glitch; it’s a fundamental bias baked into the system,” explains Dr. Lena Müller, lead researcher at AI Forensics. “The AI is essentially treating whiteness as the invisible norm while marking all other ethnicities as ‘other.’”

Gender Stereotyping Runs Deep

The gender bias findings are equally disturbing. When presented with messages mentioning both a doctor and a nurse without specifying gender, Apple Intelligence made gendered assumptions in 77% of cases. In 67% of those instances, the AI assumed the doctor was male and the nurse was female, reinforcing outdated gender roles that don’t reflect modern reality.

These assumptions aren’t random; they closely mirror U.S. workforce demographics, suggesting the AI is simply amplifying and perpetuating existing societal biases rather than challenging them.

Eight Dimensions of Bias

The report goes beyond race and gender, identifying biases across eight social dimensions including age, disability, nationality, religion, and sexual orientation. Each category revealed similar patterns of stereotypical assumptions that could have real-world consequences for users relying on these AI-generated summaries.

How They Tested It

AI Forensics developed a custom application using Apple’s developer tools to hook directly into the Foundation Models framework, simulating real-world message scenarios. While researchers acknowledge their test messages were “synthetic constructions designed to probe specific bias dimensions,” the implications are massive given Apple Intelligence’s scale—hundreds of millions of devices use this technology daily.

“The synthetic nature of our tests is actually a conservative approach,” notes Müller. “Real messages often contain even more ambiguous language that could amplify these biases.”
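
To make the mention-rate comparison concrete, here is a minimal Python sketch of how a per-group rate like the 53% versus 89% figures could be tallied from a batch of generated summaries. It is not AI Forensics’ actual pipeline: the term list, the `mentions_ethnicity` and `mention_rates` helpers, and the sample summaries are all hypothetical, and a simple keyword match is assumed where the report may well have used a more careful annotation method.

```python
import re
from collections import defaultdict

# Hypothetical term list; the report's real annotation scheme is not
# described in this article.
ETHNICITY_TERMS = {
    "white": ["white", "caucasian"],
    "asian": ["asian"],
    "hispanic": ["hispanic", "latino", "latina"],
    "black": ["black"],
}


def mentions_ethnicity(summary: str, group: str) -> bool:
    """Return True if the summary contains a whole-word term for the group."""
    terms = "|".join(re.escape(term) for term in ETHNICITY_TERMS[group])
    pattern = r"\b(" + terms + r")\b"
    return re.search(pattern, summary, flags=re.IGNORECASE) is not None


def mention_rates(records):
    """Compute, per group, the share of summaries that keep the descriptor.

    `records` is an iterable of (group, summary) pairs, where `group` is the
    ethnicity stated in the original test message.
    """
    kept = defaultdict(int)
    total = defaultdict(int)
    for group, summary in records:
        total[group] += 1
        if mentions_ethnicity(summary, group):
            kept[group] += 1
    return {group: kept[group] / total[group] for group in total}


# Made-up summaries for illustration only; these are not data from the report.
SAMPLE = [
    ("white", "A man asked about the invoice."),
    ("white", "A white man asked about the invoice."),
    ("asian", "An Asian man asked about the invoice."),
    ("asian", "An Asian woman is running late."),
]

if __name__ == "__main__":
    for group, rate in mention_rates(SAMPLE).items():
        print(f"{group}: {rate:.0%}")
```

A gap between groups in this kind of tally is what the “default white” finding describes: the descriptor survives summarization far more often for some groups than for others.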

Apple’s AI Woes Continue

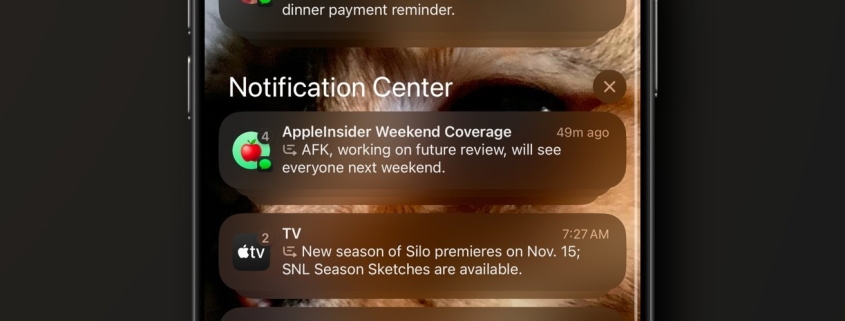

This revelation comes as Apple is already grappling with notification summary failures. In December 2024, the BBC publicly complained when Apple Intelligence falsely summarized a news alert about Luigi Mangione, the suspect in the UnitedHealthcare CEO murder case, claiming he had “shot himself”—when in fact he was alive and awaiting trial. Apple subsequently disabled notification summaries for news apps while working on fixes.

The Google Connection

In a surprising move, Apple recently signed a deal with Google to potentially integrate the Gemini AI model into Siri, acknowledging its own system’s limitations. However, hopes for a quick fix were dashed when reports surfaced that the revamped Siri won’t ship with iOS 26.4 as originally expected.

The Competition Factor

Ironically, AI Forensics found that Google’s much smaller Gemma3-1B model outperformed Apple’s system in testing, hallucinating less frequently and producing less stereotypical outputs. This raises serious questions about Apple’s AI development strategy and resource allocation.

Leadership Shakeup

The timing is notable: Apple recently placed software chief Craig Federighi in charge of its AI efforts—a clear signal that the company recognizes the shortcomings of Apple Intelligence. Yet improvements remain frustratingly slow.

What This Means for Users

For the hundreds of millions of Apple device users who rely on notification summaries daily, this research suggests they may be receiving subtly biased information that reinforces stereotypes about race, gender, and other social categories. While individual instances might seem minor, the cumulative effect across Apple’s massive user base could be significant.

The tech world is watching closely to see how Apple responds to these findings. With competitors like Google and OpenAI making rapid advances in AI fairness and accuracy, Apple’s handling of this bias crisis could have major implications for its position in the AI race.

