Anthropic Launches Claude Code Security for AI-Powered Vulnerability Scanning

In a groundbreaking move that could reshape the landscape of software security, Anthropic has unveiled Claude Code Security, an innovative AI-powered feature that promises to transform how organizations identify and remediate vulnerabilities in their codebases. This development marks a significant milestone in the evolution of DevSecOps, potentially giving defenders a crucial advantage in the escalating AI-powered cybersecurity arms race.

The Dawn of AI-Native Security Scanning

Anthropic, the artificial intelligence research company behind the Claude family of large language models, has begun rolling out its new security feature for Claude Code, currently available in a limited research preview exclusively for Enterprise and Team customers. This strategic approach allows the company to refine the technology while gathering valuable feedback from sophisticated users before a broader release.

“Claude Code Security scans codebases for security vulnerabilities and suggests targeted software patches for human review,” Anthropic explained in its official announcement. “This allows teams to find and fix security issues that traditional methods often miss.”

The timing couldn’t be more critical. As AI capabilities continue to advance at breakneck speed, the cybersecurity landscape is experiencing a fundamental shift. What was once the exclusive domain of human security researchers is increasingly being automated, with AI agents proving remarkably proficient at finding vulnerabilities that have long escaped notice.

The AI Arms Race in Cybersecurity

The introduction of Claude Code Security comes amid growing concerns about the weaponization of AI in cybersecurity. Threat actors are already leveraging artificial intelligence to automate vulnerability discovery, dramatically accelerating their ability to identify and exploit weaknesses in software systems.

Anthropic’s move represents a calculated response to this emerging threat. By providing defenders with AI-powered tools that can match or exceed the capabilities of adversarial systems, the company aims to level the playing field and potentially tip the balance in favor of security teams.

“The same capabilities that allow AI to detect security vulnerabilities that have otherwise escaped human notice could be used by adversaries to uncover exploitable weaknesses more quickly than before,” Anthropic noted. “Claude Code Security is designed to counter this kind of AI-enabled attack by giving defenders an advantage and improving the security baseline.”

Beyond Traditional Static Analysis

What sets Claude Code Security apart from conventional security scanning tools is its sophisticated approach to vulnerability detection. Rather than relying solely on pattern matching and rule-based analysis, the system employs advanced reasoning capabilities that mirror how human security researchers approach code examination.

The AI agent doesn’t just scan for known vulnerability signatures; it understands how various components interact within the codebase, traces data flows throughout the application, and identifies potential security issues that might be missed by traditional static analysis tools. This holistic approach allows it to uncover subtle vulnerabilities that emerge from the complex interactions between different code modules.

“Claude Code Security goes beyond static analysis and scanning for known patterns by reasoning about the codebase like a human security researcher,” Anthropic explained. “It understands how various components interact, traces data flows throughout the application, and flags vulnerabilities that may be missed by rule-based tools.”
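
To make the contrast concrete, here is a minimal Python sketch of the difference between a line-level pattern rule and a data-flow check. It is an illustration only and says nothing about how Claude Code Security works internally. The snippet under review, the `request_args` source, the `execute` sink, and both scanner functions are hypothetical names invented for this example.

```python
from __future__ import annotations

import re

# Hypothetical code under review: user input reaches the SQL call
# only after passing through two intermediate variables.
SNIPPET = """\
name = request_args["name"]
query = "SELECT * FROM users WHERE name = '" + name + "'"
cursor.execute(query)
"""

# Toy rule: flag execute(...) only when string concatenation
# appears inside the call on the same line.
RULE = re.compile(r"execute\(.*\+.*\)")

ASSIGN = re.compile(r"^(\w+)\s*=\s*(.+)$")   # simple "target = expr" lines
SINK = re.compile(r"execute\((\w+)\)")       # execute(<single variable>)


def rule_based_scan(source: str) -> list[int]:
    """Return line numbers matched by the single-line pattern rule."""
    return [i for i, line in enumerate(source.splitlines(), 1) if RULE.search(line)]


def data_flow_scan(source: str, sources: tuple[str, ...] = ("request_args",)) -> list[int]:
    """Return line numbers where a tainted variable reaches the sink.

    A variable becomes tainted when its assignment mentions an untrusted
    source or an already tainted variable. This toy version also counts
    words inside string literals, which a real analyzer would not do.
    """
    tainted: set[str] = set()
    findings: list[int] = []
    for i, line in enumerate(source.splitlines(), 1):
        line = line.strip()
        assignment = ASSIGN.match(line)
        if assignment:
            target, expr = assignment.groups()
            if set(re.findall(r"\w+", expr)) & (tainted | set(sources)):
                tainted.add(target)
        sink = SINK.search(line)
        if sink and sink.group(1) in tainted:
            findings.append(i)
    return findings


print("rule-based findings:", rule_based_scan(SNIPPET))
print("data-flow findings:", data_flow_scan(SNIPPET))
```

The pattern rule reports nothing because the concatenation and the database call sit on different lines, while the data-flow pass follows `name` into `query` and flags the call on line 3. Real analyzers, AI-based or otherwise, work on parsed code rather than regular expressions; the sketch only shows why tracing data across statements catches what a per-line signature can miss.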

Multi-Stage Verification and Confidence Scoring

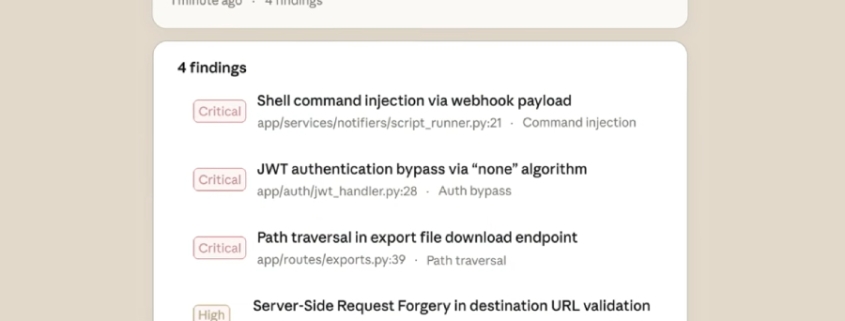

To address the perennial challenge of false positives in security scanning, Claude Code Security implements a rigorous multi-stage verification process. Each identified vulnerability undergoes re-analysis to filter out incorrect findings, significantly improving the signal-to-noise ratio for security teams.

The system also assigns severity ratings to each vulnerability, helping teams prioritize their remediation efforts based on the potential impact and exploitability of each issue. This intelligent prioritization ensures that security resources are allocated efficiently, focusing first on the most critical vulnerabilities.

Perhaps most importantly, the system provides confidence ratings for each finding. Given that security vulnerabilities often involve nuanced contextual factors that are difficult to assess from source code alone, these confidence scores help human analysts make informed decisions about which findings warrant immediate attention.
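
As a rough illustration of how a team might combine the two ratings when ordering its review queue, consider the following Python sketch. The `Finding` type, the four-level severity scale, and the 0.0 to 1.0 confidence range are assumptions made for this example; the announcement does not specify what scales the product uses.

```python
from dataclasses import dataclass

# Assumed severity scale for illustration; higher rank means more urgent.
SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1}


@dataclass(frozen=True)
class Finding:
    title: str
    severity: str      # one of the SEVERITY_RANK keys (assumed scale)
    confidence: float  # 0.0 to 1.0 (assumed scale)


def triage(findings):
    """Order findings by severity first, then by confidence, highest first."""
    return sorted(
        findings,
        key=lambda f: (SEVERITY_RANK[f.severity], f.confidence),
        reverse=True,
    )


queue = triage([
    Finding("Verbose error page", "low", 0.99),
    Finding("SQL injection in search", "critical", 0.80),
    Finding("Possible auth bypass", "critical", 0.60),
    Finding("Weak hash for tokens", "medium", 0.90),
])
for finding in queue:
    print(f"{finding.severity:<8} {finding.confidence:.2f}  {finding.title}")
```

Sorting on severity before confidence is a design choice, not a rule: a team flooded with low-confidence critical findings might prefer to weight the two ratings differently.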

Human-in-the-Loop: The Critical Safety Net

Despite its advanced capabilities, Claude Code Security maintains a crucial human-in-the-loop (HITL) approach to decision-making. This design philosophy recognizes that while AI can dramatically accelerate vulnerability detection and provide valuable insights, the final judgment on security matters should remain with human experts.

“Nothing is applied without human approval,” Anthropic emphasized. “Claude Code Security identifies problems and suggests solutions, but developers always make the call.” This approach not only ensures that nuanced security decisions benefit from human expertise but also provides an essential safeguard against potential AI errors or misinterpretations.

The human review process is facilitated through an intuitive dashboard interface where security teams can examine each identified vulnerability, review the suggested patches, and approve or reject the proposed fixes. This collaborative workflow between AI and human analysts represents the best of both worlds: the speed and thoroughness of automated analysis combined with human judgment and contextual understanding.
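
The approval gate described here can be sketched in a few lines of Python. This is a hypothetical model of the workflow, not Anthropic's API: `SuggestedPatch`, `record_decision`, and `apply_patch` are names invented for illustration. The point is that the apply step refuses any patch without a recorded human approval.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class SuggestedPatch:
    finding_id: str                        # hypothetical identifier for the finding
    diff: str                              # proposed change shown to the reviewer
    decision: Decision = Decision.PENDING  # nothing is approved by default
    reviewer: Optional[str] = None


def record_decision(patch: SuggestedPatch, reviewer: str, approve: bool) -> None:
    """Store a named human reviewer's verdict on the patch."""
    patch.decision = Decision.APPROVED if approve else Decision.REJECTED
    patch.reviewer = reviewer


def apply_patch(patch: SuggestedPatch) -> str:
    """Return the diff to apply, refusing anything a human has not approved."""
    if patch.decision is not Decision.APPROVED:
        raise PermissionError(f"{patch.finding_id}: no human approval on record")
    return patch.diff
```

Calling `apply_patch` on a freshly created patch raises `PermissionError`; it succeeds only after `record_decision(patch, reviewer, approve=True)` has been called. Defaulting every patch to `PENDING` keeps the safe behavior as the one that requires no action.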

Implications for the Future of Software Security

The introduction of Claude Code Security signals a broader trend toward AI-native security tools that can keep pace with the increasing complexity and scale of modern software systems. As applications become more distributed, interconnected, and reliant on third-party components, traditional security approaches are struggling to keep up.

AI-powered security scanning offers several compelling advantages:

Scale and Speed: AI can analyze massive codebases in minutes rather than hours or days, enabling continuous security assessment throughout the development lifecycle.

Pattern Recognition: Advanced AI models can identify subtle patterns and relationships that might indicate security vulnerabilities, even when they don’t match known vulnerability signatures.

Contextual Understanding: Unlike rule-based tools, AI can understand the broader context of code, including how different components interact and what security implications those interactions might have.

Adaptive Learning: As new vulnerability types emerge, AI systems can adapt and learn to recognize them without requiring manual rule updates.

Industry Impact and Competitive Landscape

Anthropic’s move into AI-powered security scanning positions the company in direct competition with other tech giants and security-focused AI startups. Microsoft has been integrating AI capabilities into its security tools, while Google’s Mandiant division has been exploring AI applications in threat detection and response.

The timing is particularly interesting given the recent advancements in AI capabilities across the industry. Just weeks before Anthropic’s announcement, Google’s AI system “Big Sleep” discovered five new zero-day vulnerabilities, demonstrating the growing capability of AI in security research. Similarly, Claude Opus 4.6 recently achieved remarkable results in vulnerability detection, finding over 500 high-severity issues in testing scenarios.

This competitive dynamic is likely to accelerate innovation in the space, ultimately benefiting security teams who will gain access to increasingly sophisticated tools for protecting their software systems.

Challenges and Considerations

While Claude Code Security represents a significant advancement, it’s important to acknowledge the challenges and limitations that remain. AI-powered security tools, no matter how sophisticated, are not infallible. They may still miss certain types of vulnerabilities, particularly those that require deep architectural understanding or involve complex business logic.

There are also important questions about the training data and methodologies used to develop these AI security capabilities. The effectiveness of the system depends heavily on the quality and diversity of the vulnerability data used for training, as well as the robustness of the verification processes employed to ensure accuracy.

Privacy and security concerns also arise when considering the use of AI to analyze proprietary codebases. Organizations will need to carefully evaluate the data handling practices and security measures implemented by providers like Anthropic to ensure that sensitive code is protected throughout the analysis process.

The Road Ahead

As Claude Code Security moves beyond its limited preview phase and becomes available to a broader audience, it will be fascinating to observe its real-world impact on software security practices. Early adopters in the enterprise space will likely provide valuable insights into how AI-powered security tools can be most effectively integrated into existing DevSecOps workflows.

The success of this technology could accelerate the broader adoption of AI in security operations, potentially leading to a new generation of security tools that combine the analytical power of artificial intelligence with the judgment and expertise of human security professionals.

For now, Anthropic’s announcement represents a significant milestone in the ongoing evolution of software security. By leveraging the same AI technologies that are being used to create new threats, the company is offering defenders a powerful new tool in the never-ending battle to secure our increasingly digital world.
