Anthropic launches code review tool to check flood of AI-generated code


Anthropic Unleashes AI Code Reviewer to Tackle the ‘Vibe Coding’ Chaos

In the wild west of modern software development, where AI tools like Claude Code are pumping out mountains of code at breakneck speeds, a new sheriff has ridden into town. Anthropic, the AI powerhouse behind Claude, has just launched Code Review, an AI-powered code reviewer designed to catch bugs before they wreak havoc on your codebase.

The Vibe Coding Revolution: Blessing or Curse?

Let’s face it: “vibe coding” has taken the developer world by storm. These AI tools, which translate plain language instructions into massive chunks of code, have revolutionized how we build software. Need a feature? Just describe it, and boom – instant code generation. But here’s the catch: with great power comes great… bugs.

The rise of vibe coding has introduced a Pandora’s box of issues. We’re talking about new bugs that sneak past traditional testing, security vulnerabilities that could compromise entire systems, and code that even the original developer can’t fully understand. It’s like having a brilliant but unpredictable intern who works at lightning speed but occasionally sets the office on fire.

Enter Code Review: Anthropic’s Answer to AI-Generated Chaos

Anthropic’s solution? An AI reviewer that’s like having a meticulous senior developer watching over your shoulder 24/7. Code Review, launched Monday in Claude Code, is designed to catch those pesky bugs before they make it into your production codebase.

“We’ve seen explosive growth in Claude Code, especially in enterprise environments,” says Cat Wu, Anthropic’s head of product. “But here’s the problem: Claude Code is generating pull requests faster than humans can review them. It’s creating a massive bottleneck.”

Pull requests – those crucial code changes that developers submit for review before merging – have become a traffic jam of epic proportions. With AI tools cranking out code at unprecedented speeds, human reviewers are drowning in a sea of pull requests, struggling to keep up.

The Enterprise Gold Rush: Why This Matters Now

This launch couldn’t come at a more critical time for Anthropic. The company is currently locked in a legal battle with the Department of Defense over supply chain risk designations, making its enterprise business more crucial than ever. With subscriptions quadrupling since the year’s start and Claude Code’s revenue hitting a staggering $2.5 billion run rate, Anthropic is doubling down on its corporate clients.

“We’re targeting the big players,” Wu explains. “Companies like Uber, Salesforce, and Accenture are already using Claude Code, and now they need help managing the avalanche of pull requests it generates.”

How Code Review Actually Works: The Tech Behind the Magic

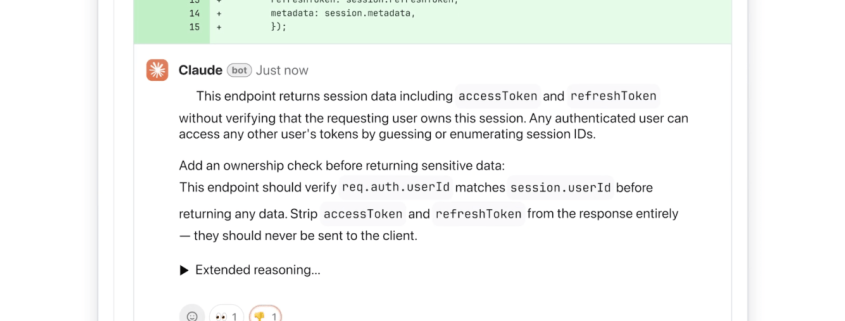

Here’s where it gets interesting. Once enabled, Code Review integrates seamlessly with GitHub and automatically analyzes every pull request. But it’s not just another automated linter that annoys developers with style nitpicks. This AI focuses on what really matters: logical errors that could break your application.

The system uses multiple AI agents working in parallel, each examining the codebase from different angles. Think of it as a team of specialized detectives, each with their own expertise, collaborating to solve the mystery of potential bugs. A final agent then aggregates these findings, removing duplicates and prioritizing the most critical issues.
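The fan-out-then-aggregate pattern described above can be sketched in a few lines. This is only an illustration of the general idea, not Anthropic's implementation: the `Finding` type, the two stand-in agent functions, and the numeric severity scale are all hypothetical, and real agents would call a model rather than return canned results.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One reported issue (hypothetical shape, for illustration only)."""
    file: str
    line: int
    message: str
    severity: int  # assumed scale: lower number = more critical


# Hypothetical stand-ins for specialized reviewer agents.
def logic_agent(diff: str) -> list[Finding]:
    return [Finding("app.py", 10, "off-by-one in loop bound", 0)]


def regression_agent(diff: str) -> list[Finding]:
    return [
        Finding("app.py", 10, "off-by-one in loop bound", 0),  # duplicate
        Finding("db.py", 42, "query result may be None", 1),
    ]


def review(diff: str, agents) -> list[Finding]:
    # Run every agent on the same diff in parallel.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(lambda agent: agent(diff), agents))
    # Aggregation step: drop exact duplicates, then put critical issues first.
    unique = {finding for findings in results for finding in findings}
    return sorted(unique, key=lambda f: (f.severity, f.file, f.line))


report = review("<diff text>", [logic_agent, regression_agent])
for finding in report:
    print(finding.severity, finding.file, finding.line, finding.message)
```

Here the duplicate off-by-one report collapses into a single entry, and the remaining findings are ordered by severity before anything reaches the developer.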

Smart, Not Annoying: The Code Review Philosophy

“We learned from past automated feedback tools that developers get frustrated when suggestions aren’t immediately actionable,” Wu notes. “That’s why we’re laser-focused on logical errors – the highest priority issues that need fixing.”

The AI doesn’t just point out problems; it explains its reasoning step-by-step. It outlines what it thinks the issue is, why it’s problematic, and how to potentially fix it. Plus, it uses a clever color-coding system: red for critical issues, yellow for potential problems worth reviewing, and purple for issues tied to existing code or historical bugs.
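One way to picture a single review comment is as a small record carrying the three parts of the explanation plus a color label. The sketch below is an assumption about how such data could be modeled; the class names, field names, and output format are invented for illustration, and only the three colors and their meanings come from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    # Colors and meanings as described in the article; names are hypothetical.
    CRITICAL = "red"        # critical issues
    REVIEW = "yellow"       # potential problems worth reviewing
    PREEXISTING = "purple"  # tied to existing code or historical bugs


@dataclass
class ReviewComment:
    issue: str          # what the reviewer thinks is wrong
    reason: str         # why it is problematic
    suggested_fix: str  # how it might be fixed
    severity: Severity

    def render(self) -> str:
        return (
            f"[{self.severity.value}] {self.issue}\n"
            f"Why: {self.reason}\n"
            f"Possible fix: {self.suggested_fix}"
        )


comment = ReviewComment(
    issue="Loop skips the last element",
    reason="range(len(items) - 1) stops one index early",
    suggested_fix="Iterate over range(len(items))",
    severity=Severity.CRITICAL,
)
print(comment.render())
```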

Security Without the Overkill

While Code Review includes light security analysis, Anthropic offers deeper security scanning through its separate Claude Code Security product. This modular approach lets teams choose the right level of scrutiny for their needs.

The Cost of Quality: Premium Pricing for Premium Peace of Mind

Let’s talk about the elephant in the room: cost. This multi-agent architecture isn’t cheap. Pricing is token-based and varies with code complexity, but Wu estimates each review will run between $15 and $25 on average. It’s a premium service, but when you consider the cost of bugs making it to production – both in terms of money and reputation – it starts to look like a bargain.
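For a rough sense of scale, the per-review estimate can be turned into a monthly range. The figure of 200 reviews per month below is a made-up example, not a number from Anthropic, and since pricing is token-based, actual bills would vary with code size and complexity.

```python
def monthly_cost_range(reviews_per_month: int,
                       low: float = 15.0,
                       high: float = 25.0) -> tuple[float, float]:
    """Return (low, high) monthly spend in dollars for the quoted per-review range."""
    return reviews_per_month * low, reviews_per_month * high


# Hypothetical team opening 200 reviewed pull requests a month.
low, high = monthly_cost_range(200)
print(f"${low:,.0f} to ${high:,.0f} per month")  # $3,000 to $5,000 per month
```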

The Future of Development: Faster, Better, Bug-Free?

“[Code Review] is driven by massive market demand,” Wu emphasizes. “As developers build faster with Claude Code, they’re seeing the friction to creating new features decrease dramatically. But this increased speed has created an even higher demand for code review.”

The vision is clear: enable enterprises to build software faster than ever before, but with significantly fewer bugs than traditional development processes. It’s the holy grail of software engineering – speed without sacrificing quality.

The Bottom Line

Anthropic’s Code Review represents a fascinating evolution in software development. As AI tools continue to accelerate code generation, we need equally sophisticated AI tools to ensure that code is actually good. It’s a classic case of fighting fire with fire, but in this case, the fire is digital, and the stakes are higher than ever.

For enterprise developers drowning in pull requests and CTOs worried about AI-generated bugs slipping into production, Code Review might just be the lifesaver they’ve been waiting for. The question now is: can AI review AI-generated code well enough to make this worth the investment? Anthropic is betting the answer is yes.

