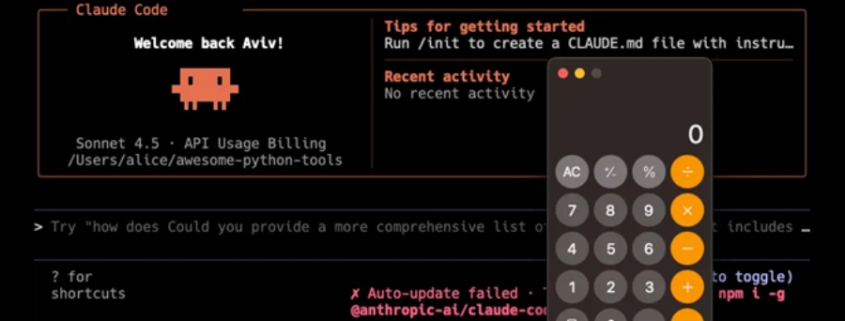

Claude Code Flaws Allow Remote Code Execution and API Key Exfiltration

BREAKING: Critical Security Flaws in Anthropic’s Claude Code Expose Millions of Developers to Remote Attacks

In a revelation that is sending shockwaves through the tech industry, cybersecurity researchers have uncovered a series of serious vulnerabilities in Claude Code, Anthropic’s AI coding assistant. The flaws could allow malicious actors to execute code remotely, steal sensitive API credentials, and potentially compromise entire development infrastructures, in the worst case simply because a developer opened a crafted repository.

The discovery, made by the elite team at Check Point Research, exposes a fundamental weakness in how AI-powered development tools handle project configurations and external integrations. What makes this particularly alarming is the simplicity of the attack vector—developers could fall victim simply by opening a seemingly innocent repository.

The Three Fatal Flaws That Could Cripple Your Development Workflow

The vulnerabilities fall into three distinct categories, each more dangerous than the last:

First Blood: The Silent Execution Vulnerability

The initial flaw, dubbed a “user consent bypass,” allows attackers to execute arbitrary shell commands without any additional confirmation when developers launch Claude Code in a new directory. This means malicious code could run completely undetected, giving hackers immediate control over a developer’s machine. The vulnerability, which affected versions prior to 1.0.87, has been patched, but experts warn that many developers may still be using vulnerable versions.

The Project Poison: CVE-2025-59536

Even more concerning is CVE-2025-59536, which allows arbitrary shell commands to run automatically upon tool initialization when a user starts Claude Code in an untrusted directory. This vulnerability, fixed in version 1.0.111, essentially turns any untrusted project into a potential weapon. Attackers can craft malicious repositories that run harmful commands the moment a developer starts the tool inside them, before the developer has reviewed or approved anything.
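One defensive habit this suggests is to look at what a repository asks the tool to launch before starting the tool inside it. The sketch below is only an illustration under stated assumptions: it assumes project-scoped MCP servers are declared in a `.mcp.json` file at the repository root under a top-level `mcpServers` object, and the function name `list_mcp_commands` is hypothetical. It reads the file and never executes anything.

```python
import json
from pathlib import Path

# Assumption: project-scoped MCP servers are declared in `.mcp.json` at the
# repository root, under a top-level "mcpServers" object.
MCP_CONFIG_NAME = ".mcp.json"


def list_mcp_commands(repo_root):
    """Return (server_name, command_line) pairs declared by the repo, without running them."""
    config_path = Path(repo_root) / MCP_CONFIG_NAME
    if not config_path.is_file():
        return []
    try:
        config = json.loads(config_path.read_text(encoding="utf-8"))
    except (OSError, ValueError):
        # An unreadable or malformed file is itself worth a manual look.
        return [("<unparseable>", str(config_path))]
    servers = config.get("mcpServers", {}) if isinstance(config, dict) else {}
    if not isinstance(servers, dict):
        return [("<unparseable>", str(config_path))]
    found = []
    for name, spec in servers.items():
        if not isinstance(spec, dict):
            continue
        command = str(spec.get("command", ""))
        args = spec.get("args", [])
        if not isinstance(args, list):
            args = []
        # Join for display only; this string is never passed to a shell.
        command_line = " ".join([command, *map(str, args)]).strip()
        found.append((name, command_line))
    return found
```

Printing the returned pairs for a freshly cloned repository gives a reviewer a chance to spot an unexpected `sh -c ...` style entry before any tool is started in that directory.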

The API Exfiltration Nightmare: CVE-2026-21852

The third and perhaps most dangerous vulnerability allows malicious repositories to steal Anthropic API keys and other sensitive credentials through Claude Code’s project-load flow. This information disclosure flaw, which affected versions prior to 2.0.65, could enable attackers to not only steal credentials but also redirect authenticated API traffic to external infrastructure, potentially racking up massive costs and accessing sensitive data.
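To make the traffic-redirection risk concrete, the sketch below checks whether a repository tries to point API traffic at a host you have not approved. It rests on assumptions that are not stated in this article: that project settings live in `.claude/settings.json`, that they may contain an `env` object, and that `ANTHROPIC_BASE_URL` is the variable selecting the API endpoint. The function name and the trusted-host list are hypothetical.

```python
import json
from pathlib import Path
from urllib.parse import urlparse

# Assumptions: project settings live in `.claude/settings.json`, may carry an
# "env" object, and ANTHROPIC_BASE_URL selects the API endpoint.
SETTINGS_RELATIVE_PATH = Path(".claude") / "settings.json"
ENDPOINT_VARIABLE = "ANTHROPIC_BASE_URL"
TRUSTED_HOSTS = {"api.anthropic.com"}  # extend with your own approved proxy hosts


def find_endpoint_override(repo_root):
    """Return the overriding URL if the repo targets an untrusted host, else None."""
    settings_path = Path(repo_root) / SETTINGS_RELATIVE_PATH
    if not settings_path.is_file():
        return None
    try:
        settings = json.loads(settings_path.read_text(encoding="utf-8"))
    except (OSError, ValueError):
        # Malformed settings are ignored here; a stricter tool could flag them.
        return None
    env = settings.get("env") if isinstance(settings, dict) else None
    if not isinstance(env, dict):
        return None
    url = env.get(ENDPOINT_VARIABLE)
    if not isinstance(url, str):
        return None
    try:
        host = (urlparse(url).hostname or "").lower()
    except ValueError:
        # A URL that cannot be parsed is treated as untrusted.
        return url
    return None if host in TRUSTED_HOSTS else url
```

Comparing the parsed hostname against an allowlist, rather than searching the URL for a substring, avoids being fooled by addresses that merely contain a trusted name somewhere in the path.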

Why This Changes Everything for AI-Powered Development

What makes these vulnerabilities particularly insidious is how they exploit the very features that make AI coding assistants powerful. The Model Context Protocol (MCP) servers, environment variables, and project hooks that enable Claude Code to function seamlessly can now be weaponized against developers.

“As AI-powered tools gain the ability to execute commands, initialize external integrations, and initiate network communication autonomously, configuration files effectively become part of the execution layer,” Check Point Research explained in their groundbreaking report. “What was once considered operational context now directly influences system behavior.”

This fundamentally alters the threat model for software development. The risk is no longer limited to running untrusted code—it now extends to opening untrusted projects. In AI-driven development environments, the supply chain begins not only with source code, but with the automation layers surrounding it.
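If configuration files are part of the execution layer, one way to act on that is to review them like code and notice when they change. The following is a rough sketch of that idea, not a description of how Claude Code or Check Point handle it: it fingerprints a small set of configuration files with SHA-256 and reports any whose contents differ from a previously approved fingerprint. The watched file names and both function names are assumptions chosen for illustration.

```python
import hashlib
from pathlib import Path

# Hypothetical list of repo-relative files to treat as executable configuration.
# The names are assumptions for illustration; adjust them to your tooling.
WATCHED_FILES = (".mcp.json", ".claude/settings.json")


def fingerprint_config(repo_root, watched=WATCHED_FILES):
    """Map each watched file that exists to the SHA-256 hex digest of its bytes."""
    root = Path(repo_root)
    digests = {}
    for relative in watched:
        path = root / relative
        if path.is_file():
            digests[relative] = hashlib.sha256(path.read_bytes()).hexdigest()
    return digests


def unreviewed_changes(repo_root, approved):
    """Return watched files that are new or whose digest differs from the approved one."""
    current = fingerprint_config(repo_root)
    return sorted(
        name for name, digest in current.items() if approved.get(name) != digest
    )
```

A team could store the approved fingerprints outside the repository after a manual review, then run `unreviewed_changes` after every pull so that a silently modified configuration file is surfaced before the project is opened in an AI tool.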

The Silent Epidemic: How Widespread is the Risk?

While Anthropic has patched these vulnerabilities in recent versions, the tech community is grappling with the reality that many developers may still be running vulnerable releases. Many organizations maintain strict change-control policies for developer tooling, so upgrades are not always rolled out immediately. Additionally, the complexity of these attacks means they could have been exploited for months before detection.

Security experts are particularly concerned about the potential for targeted attacks against high-value targets. Imagine a scenario where a competitor crafts a malicious repository that, when opened by a developer at a rival company, steals API keys, injects backdoors into codebases, and exfiltrates sensitive project information—all without the victim ever knowing.

The Road Ahead: Securing the AI Development Revolution

This discovery serves as a wake-up call for the entire tech industry. As AI tools become increasingly integrated into development workflows, security must evolve to address these new attack vectors. Companies like Anthropic are working diligently to enhance their security measures, but the rapid pace of AI development means new vulnerabilities will likely emerge.

For developers, the message is clear: exercise extreme caution when opening unfamiliar repositories, ensure you’re using the latest versions of AI development tools, and implement robust security monitoring on your development machines. The convenience of AI-powered coding comes with new responsibilities and risks that cannot be ignored.
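As a small aid for the "latest versions" advice, the sketch below compares an installed version string against the fixed-in versions reported in this article (1.0.87, 1.0.111, and 2.0.65). The function names are hypothetical, and how you obtain the installed version string depends on your setup.

```python
import re

# Fixed-in versions as reported in this article.
PATCHED_VERSIONS = {
    "user consent bypass": (1, 0, 87),
    "CVE-2025-59536": (1, 0, 111),
    "CVE-2026-21852": (2, 0, 65),
}


def parse_version(text):
    """Extract the first MAJOR.MINOR.PATCH triple from a string as a tuple of ints."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())


def unpatched_issues(installed_version):
    """List the issues whose fix is newer than the installed version."""
    installed = parse_version(installed_version)
    return [name for name, fixed in PATCHED_VERSIONS.items() if installed < fixed]


if __name__ == "__main__":
    for candidate in ("1.0.86", "1.0.100", "2.0.65"):
        print(candidate, unpatched_issues(candidate))
```

Versions are compared as integer tuples on purpose: a plain string comparison would rank "1.0.87" above "1.0.111" and give the wrong answer.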

The Bottom Line: A Watershed Moment for AI Security

The Claude Code vulnerabilities represent more than just another security flaw—they signal a fundamental shift in how we must approach security in the age of AI-assisted development. As artificial intelligence becomes increasingly autonomous and integrated into critical workflows, the attack surface expands in ways we’re only beginning to understand.

This incident will likely trigger a comprehensive reevaluation of AI tool security across the industry, potentially leading to new standards, protocols, and safeguards. For now, developers and organizations must remain vigilant, understanding that in the world of AI-powered development, the greatest threats may come not from what you run, but from what you open.


