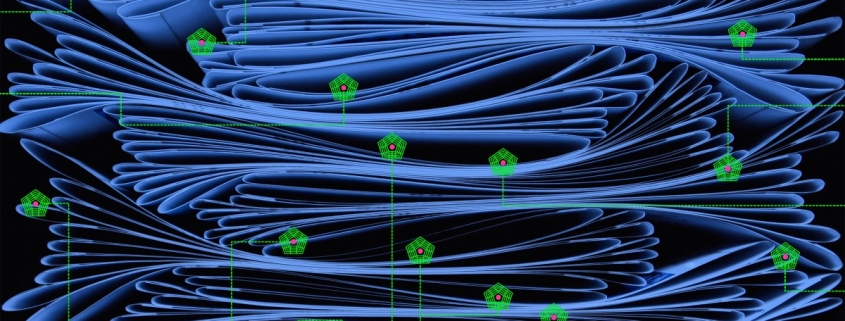

Is the Pentagon allowed to surveil Americans with AI?

Pentagon’s AI Surveillance Push Sparks Privacy Alarm as Tech Law Lags Behind

In an era where digital footprints are as revealing as fingerprints, the U.S. Department of Defense’s expanding use of artificial intelligence for data analysis is raising urgent questions about the boundaries of surveillance, privacy, and the law. While the Pentagon insists its activities are within legal bounds, experts warn that the rapid evolution of AI technology has outpaced the regulatory framework meant to protect Americans’ civil liberties.

The Fourth Amendment, a cornerstone of American privacy rights, was crafted in an age when “search and seizure” meant physically entering someone’s home. In the digital age, that landscape has transformed entirely. Laws like the Foreign Intelligence Surveillance Act (FISA) of 1978 and the Electronic Communications Privacy Act (ECPA) of 1986 were designed for wiretaps and intercepted emails, not the vast, intricate webs of online data generated by billions of users every day.

Today, every click, location check-in, and online purchase contributes to a sprawling digital dossier. When combined with advanced AI, this data can be mined for patterns, inferences, and detailed profiles, often without individuals ever realizing the extent of the surveillance. “AI can aggregate individual pieces of information, none of which is by itself sensitive, and therefore none of which by itself is regulated, and it can give the government a lot of powers that the government didn’t have before,” explains Alan Rozenshtein, a legal scholar specializing in surveillance and technology.

The implications are profound. AI doesn’t just process data—it synthesizes it, drawing connections that were previously invisible. This capability enables large-scale monitoring that would have been impossible with older technologies. And as long as the government collects this information lawfully, current statutes give it broad latitude to use it however it sees fit, including feeding it into AI systems for analysis.

The disconnect between law and technology is stark. “The law has not caught up with technological reality,” Rozenshtein notes. While the legal framework was built for an analog world, the digital age has ushered in a new era of surveillance—one where privacy protections are increasingly porous.

Yet, the Pentagon maintains that its use of AI is driven by legitimate national security needs. Loren Voss, a former military intelligence officer, emphasizes that intelligence gathering on Americans is limited to specific, high-stakes missions—such as investigating individuals working for foreign adversaries or planning international terrorist acts. But even targeted operations can sometimes expand into broader data collection, a reality that makes many Americans uneasy.

“This kind of collection does make people nervous,” Voss admits. The tension between security and privacy is not new, but AI’s capabilities have intensified the debate.

In response to growing concerns, OpenAI—whose technology is among those being considered for government contracts—has updated its terms of service. The company now explicitly prohibits the use of its AI systems for “domestic surveillance of U.S. persons and nationals,” aligning with existing legal restrictions. The amendment clarifies that this includes “deliberate tracking, surveillance or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information.”

However, critics argue that these safeguards may be more symbolic than substantive. OpenAI’s contract still allows the Pentagon to use its AI for “all lawful purposes,” a clause that could encompass the collection and analysis of sensitive personal data. “OpenAI can say whatever it wants in its agreement… but the Pentagon’s gonna use the tech for what it perceives to be lawful,” says Jessica Tillipman, a law professor at the George Washington University Law School. “Most of the time, companies are not going to be able to stop the Pentagon from doing anything.”

This reality underscores a fundamental challenge: in the realm of national security, the government’s interpretation of what is “lawful” can be broad, and tech companies may have limited ability to enforce stricter ethical boundaries once a contract is signed.

As AI continues to evolve, the debate over surveillance, privacy, and the role of technology in national security is only set to intensify. For now, the law remains a step behind the technology—and the stakes for American privacy have never been higher.

