Meta Developed 4 New Chips to Power Its AI and Recommendation Systems

Meta Unveils Four New Custom AI Chips in Ambitious Push to Dominate the Generative AI Race

In a move that signals Meta’s escalating commitment to artificial intelligence, the social media giant has announced four new custom computer chips designed to power its expanding suite of AI features and the content ranking systems behind Facebook, Instagram, and its other platforms. The announcement marks another step in Meta’s evolution from social networking company to AI infrastructure player. Meta is bringing more of its silicon in-house in a bid to outpace competitors and tailor hardware to its own machine learning and content delivery workloads.

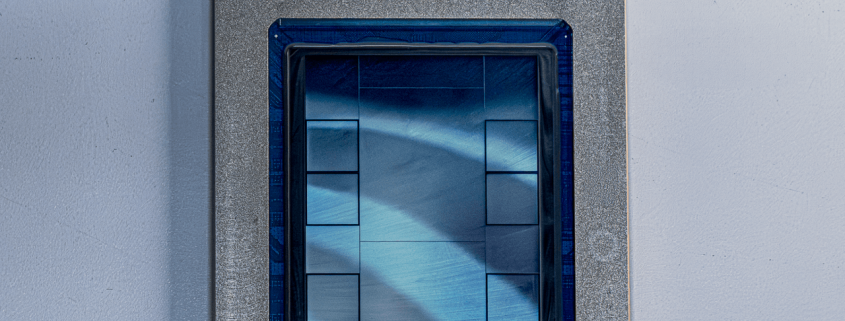

The new chips belong to Meta’s proprietary MTIA (Meta Training and Inference Accelerator) family and represent years of development and strategic partnerships with industry heavyweights. Meta designed them with Broadcom, the semiconductor design powerhouse, and built them on RISC-V, an open, royalty-free instruction set architecture. This choice gives Meta more flexibility in how it designs its hardware. The chips are manufactured by Taiwan Semiconductor Manufacturing Company (TSMC), the world’s leading contract chipmaker for advanced process nodes, so Meta’s silicon is backed by some of the most advanced production capabilities available.

What stands out most is the pace of development. The MTIA 300 is already in production and deployed across Meta’s data centers, while the other three chips, the MTIA 400, 450, and 500, are scheduled to ship between early 2026 and late 2027. Delivering three more chips in roughly two years is far faster than the multi-year development cycles typical of the chip industry. The schedule shows how urgently Meta wants to keep up in the AI arms race.

According to YJ Song, Meta’s Vice President of Engineering, the company’s iterative approach to chip development is a direct response to the breakneck speed at which AI models are evolving. “AI workloads are changing so rapidly that by the time traditional chips reach production, the models they were designed for may already be obsolete,” Song explained in a detailed blog post. “Our modular chiplet architecture allows us to rapidly incorporate the latest AI insights and hardware innovations with each generation, ensuring our infrastructure remains state-of-the-art.”

The MTIA 300, already deployed in Meta’s data centers, is optimized for training the models that power content ranking and recommendation. These are the invisible engines that decide what billions of users see in their Facebook and Instagram feeds every day, from which friend’s vacation photos appear first to which viral videos keep users scrolling. By building custom silicon for these workloads, Meta aims to make its content delivery systems more efficient and more effective.

The remaining three chips are designed for inference: running trained AI models to generate outputs such as text, images, and perhaps eventually entire virtual environments. At Meta’s scale, this work is computationally intensive. Meta says the MTIA 400 delivers performance “competitive with leading commercial products.” The chip has already completed testing and is expected to begin shipping to data centers soon. It is Meta’s answer to the dominance of established players like NVIDIA in AI hardware.

Building on the MTIA 400, the MTIA 450 will feature double the high-bandwidth memory (HBM). Memory is a key constraint for inference, because a large model’s weights and working data must sit in fast memory close to the compute units. More HBM lets a chip serve larger models or handle more requests at once. The MTIA 450 is scheduled for release in early 2027. The MTIA 500, slated for late 2027, adds more memory capacity along with “innovations in low-precision data.” Representing numbers in fewer bits, for example 8-bit instead of 16-bit values, reduces the memory and data movement each operation needs. That could make AI inference faster and more efficient at Meta’s scale.

Meta’s aggressive push into custom silicon is part of a broader industry trend, as software companies recognize that off-the-shelf hardware often can’t keep pace with their specialized AI needs. OpenAI, for instance, recently announced its own partnership with Broadcom to develop custom AI accelerators, following a path remarkably similar to Meta’s. This convergence suggests that the future of AI may be defined not just by algorithmic breakthroughs, but by who controls the hardware that powers these systems.

The timing of Meta’s announcement is particularly noteworthy, coming shortly after reports that the company was scaling back some of its more ambitious internal chip design efforts. By unveiling this comprehensive MTIA roadmap, Meta appears eager to dispel any narrative of retreat and project an image of forward momentum. Still, developing cutting-edge silicon is extraordinarily expensive and technically challenging, so Meta will likely continue to rely heavily on established chip vendors like NVIDIA for the foreseeable future.

This dual strategy is already evident in Meta’s recent chip purchases. The MTIA lineup was announced soon after multibillion-dollar deals with NVIDIA and AMD, and Meta has also signed agreements to rent AI chips from Google. This approach combines custom silicon for specialized workloads with large purchases of general-purpose AI hardware from market leaders. It reflects a pragmatic reading of the current state of the semiconductor industry.

The implications of Meta’s chip strategy extend beyond the company’s own data centers. As one of the world’s largest technology companies, with billions of users across its platforms, Meta makes hardware decisions that can influence the wider AI ecosystem. Chips tuned to its specific workloads could give Meta performance or efficiency advantages over competitors that still rely on general-purpose hardware. Its willingness to invest billions in custom silicon could also accelerate innovation across the semiconductor industry and lead to architectural advances that benefit the broader tech community.

Looking ahead, Meta’s chip ambitions may reach well beyond the data center. Some industry analysts speculate that the company’s long-term plans could include specialized AI chips for augmented and virtual reality devices, autonomous systems, and perhaps even quantum computing applications. As Meta continues to blur the lines between social networking, artificial intelligence, and the metaverse, control over its underlying hardware infrastructure could prove to be one of its most valuable strategic assets.

For now, the tech world is watching as Meta’s MTIA chips move from design documents to deployed silicon, with the potential to reshape artificial intelligence and content delivery in ways that are only beginning to come into view. One thing is clear: in the high-stakes game of AI development, Meta has raised the ante.

