microgpt: A 200-Line GPT in Pure Python

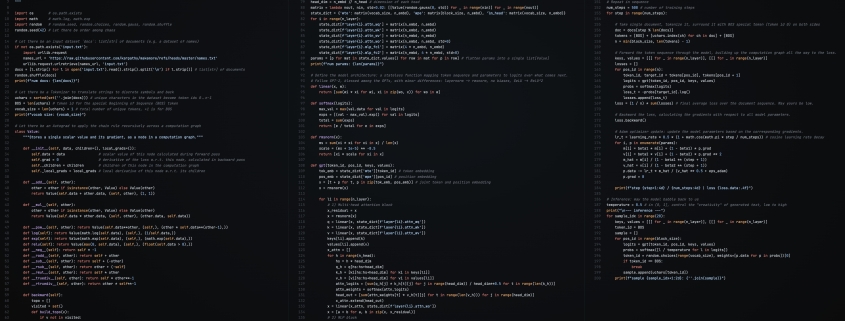

A single-file, dependency-free implementation of a GPT model that trains and generates text from scratch.

In the world of AI, large language models like GPT-4 and ChatGPT are often seen as black boxes—massive systems trained on vast datasets with billions of parameters. But what if you could strip it all down to the bare essentials? That’s exactly what microgpt does.

This project is a single Python file, just 200 lines of code, with no external dependencies. It contains the full algorithmic content of a GPT model: a dataset of documents, a tokenizer, an autograd engine, a GPT-2-like neural network architecture, the Adam optimizer, a training loop, and an inference loop. Everything else is just efficiency.

The beauty of microgpt lies in its simplicity. It’s the culmination of years of work on projects like micrograd, makemore, and nanogpt, all aimed at distilling large language models (LLMs) to their core components. And yes, it’s beautiful. 🥹

Where to Find It

You can find the code on GitHub or run it directly in a Google Colab notebook. The script is self-contained and runs in about a minute on a MacBook.

How It Works

Dataset

The fuel of large language models is a stream of text data. In microgpt, we use a simple dataset of 32,000 names, one per line. The goal is to train the model to learn the patterns in the data and generate new, plausible-sounding names.

Here’s a preview of what the model generates after training:

sample 1: kamon

sample 2: ann

sample 3: karai

sample 4: jaire

sample 5: vialan

sample 6: karia

sample 7: yeran

sample 8: anna

sample 9: areli

sample 10: kaina

sample 11: konna

sample 12: keylen

sample 13: liole

sample 14: alerin

sample 15: earan

sample 16: lenne

sample 17: kana

sample 18: lara

sample 19: alela

sample 20: anton

The same framing carries over to much larger models. From the model’s perspective, your conversation with ChatGPT is just a “document”: your prompt initializes it, and the model’s response is a statistical completion of that document.

Tokenizer

Neural networks work with numbers, not characters, so we need a way to convert text into a sequence of integer token IDs and back. In microgpt, we use a simple tokenizer that assigns one integer to each unique character in the dataset. We also add a special BOS (Beginning of Sequence) token to mark the start and end of a document.

For example, the name “emma” becomes [BOS, e, m, m, a, BOS]. The model learns that BOS initiates a new name and that another BOS ends it.
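
The character-level scheme described above can be sketched in a few lines. This is a minimal illustration rather than the script's actual code: the three-name `docs` list is a hypothetical stand-in for the real dataset, and giving BOS the next free ID is an assumption.

```python
# Hypothetical stand-in for the names dataset; the real file has ~32,000 names.
docs = ["emma", "olivia", "ava"]

# One integer ID per unique character, in sorted order.
chars = sorted(set("".join(docs)))
stoi = {ch: i for i, ch in enumerate(chars)}
itos = {i: ch for ch, i in stoi.items()}

# BOS takes the next free ID (an assumption; any unused ID would work).
BOS = len(chars)
vocab_size = len(chars) + 1


def encode(doc):
    """Wrap a document in BOS on both sides and map characters to IDs."""
    return [BOS] + [stoi[ch] for ch in doc] + [BOS]


def decode(ids):
    """Map IDs back to characters, dropping the BOS markers."""
    return "".join(itos[i] for i in ids if i != BOS)


tokens = encode("emma")
print(tokens)          # [7, 1, 4, 4, 0, 7]
print(decode(tokens))  # emma
```

With a dataset that uses all 26 lowercase letters, the same scheme would give a vocabulary of 27 tokens, which matches the count quoted later in the article.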

Autograd

Training a neural network requires gradients: for each parameter in the model, we need to know “if I nudge this number up a little, does the loss go up or down, and by how much?” The computation graph has many inputs but funnels down to a single scalar output: the loss.

In microgpt, we implement autograd from scratch in a single class called Value. This class wraps a scalar number and tracks how it was computed. Every time you do math with Value objects (add, multiply, etc.), the result is a new Value that remembers its inputs and the local derivative of that operation.

Here’s a concrete example:

```python
a = Value(2.0)
b = Value(3.0)
c = a * b  # c = 6.0
L = c + a  # L = 8.0
L.backward()
print(a.grad)  # 4.0 (dL/da = b + 1 = 3 + 1, via both paths)
print(b.grad)  # 2.0 (dL/db = a = 2)
```

This is the same algorithm that PyTorch’s .backward() runs, just on scalars instead of tensors. It’s significantly smaller and simpler but of course a lot less efficient.
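
To make the mechanics concrete, here is a minimal sketch of what such a Value class could look like. It is an illustration under assumptions, not the script's actual class: it supports only addition and multiplication, and the attribute names `_children` and `_local_grads` are hypothetical.

```python
class Value:
    """A scalar that remembers how it was computed (minimal sketch)."""

    def __init__(self, data, children=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._children = children        # input Values of the op that produced this one
        self._local_grads = local_grads  # d(self)/d(child), one entry per child

    def __add__(self, other):
        # d(a + b)/da = 1 and d(a + b)/db = 1
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        # d(a * b)/da = b and d(a * b)/db = a
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def backward(self):
        # Order the graph so every node comes after the nodes it depends on
        topo, visited = [], set()

        def build(v):
            if v not in visited:
                visited.add(v)
                for child in v._children:
                    build(child)
                topo.append(v)

        build(self)
        self.grad = 1.0  # dL/dL = 1
        # Walk from the output back to the inputs, applying the chain rule
        for v in reversed(topo):
            for child, local in zip(v._children, v._local_grads):
                child.grad += local * v.grad


a = Value(2.0)
b = Value(3.0)
L = a * b + a
L.backward()
print(a.grad, b.grad)  # 4.0 2.0
```

Gradients accumulate with `+=` because a value that feeds into the loss along several paths, like `a` here, receives one contribution per path.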

Parameters

The parameters are the knowledge of the model. They are a large collection of floating point numbers that start out random and are iteratively optimized during training. In microgpt, we initialize them to small random numbers drawn from a Gaussian distribution.

Here’s a breakdown of the parameters:

- Embedding tables: Maps tokens to vectors.

- Attention weights: Used in the multi-head attention mechanism.

- MLP weights: Used in the feed-forward network.

- Output projection: Maps the final hidden state to vocabulary size.

In our tiny model, this comes out to 4,192 parameters. GPT-2 had 1.6 billion, and modern LLMs have hundreds of billions.
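
The 4,192 figure can be reproduced with a short counting sketch. The hyperparameters below (embedding width 16, context length 16, one layer, a 4x MLP expansion) and the standard deviation of 0.02 are assumptions picked to match that total; the article does not state them, and the dictionary keys are hypothetical names.

```python
import random

random.seed(42)

# Assumed hyperparameters, chosen so the total matches the article's 4,192
vocab_size = 27   # 26 letters + BOS, per the article
n_embd = 16       # assumed embedding width
block_size = 16   # assumed maximum sequence length
n_layer = 1       # assumed number of transformer layers


def matrix(n_out, n_in, std=0.02):
    """An n_out x n_in matrix of small Gaussian random numbers."""
    return [[random.gauss(0.0, std) for _ in range(n_in)] for _ in range(n_out)]


params = {
    "wte": matrix(vocab_size, n_embd),      # token embedding table
    "wpe": matrix(block_size, n_embd),      # position embedding table
    "lm_head": matrix(vocab_size, n_embd),  # output projection
}
for i in range(n_layer):
    for name in ("wq", "wk", "wv", "wo"):   # attention weights
        params[f"layer{i}.attn_{name}"] = matrix(n_embd, n_embd)
    params[f"layer{i}.mlp_fc1"] = matrix(4 * n_embd, n_embd)  # MLP expand
    params[f"layer{i}.mlp_fc2"] = matrix(n_embd, 4 * n_embd)  # MLP contract

num_params = sum(len(row) for m in params.values() for row in m)
print(num_params)  # 4192
```

Under these assumptions the embeddings and output projection contribute 1,120 numbers, attention 1,024, and the MLP 2,048.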

Architecture

The model architecture is a stateless function: it takes a token, a position, the parameters, and the cached keys/values from previous positions, and returns logits (scores) over what token the model thinks should come next in the sequence.

We follow GPT-2 with minor simplifications: RMSNorm instead of LayerNorm, no biases, and ReLU instead of GELU. The model processes one token at a time, building up the KV cache as it goes.

Here’s what happens step by step:

- Embeddings: The token and position IDs look up their respective embedding tables, which are added together.

- Attention block: The current token is projected into query, key, and value vectors. The model computes attention weights and takes a weighted sum of the cached values.

- MLP block: A two-layer feed-forward network applies a learned linear transformation, ReLU activation, and another linear transformation.

- Residual connections: Both the attention and MLP blocks add their output back to their input.

- Output: The final hidden state is projected to vocabulary size, producing one logit per token.
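
The steps above can be sketched with plain floats instead of Value objects. This is a simplified illustration, not the script's code: it uses a single attention head and one layer, applies RMSNorm before each block (an assumed placement), and all sizes and names such as `gpt_step` are hypothetical.

```python
import math
import random

random.seed(0)
vocab_size, n_embd, block_size = 27, 8, 16  # hypothetical sizes


def matrix(n_out, n_in):
    return [[random.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]


def linear(x, w):
    """Matrix-vector product: one output per row of w (no bias)."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rmsnorm(x):
    scale = (sum(xi * xi for xi in x) / len(x) + 1e-5) ** -0.5
    return [xi * scale for xi in x]


p = {
    "wte": matrix(vocab_size, n_embd), "wpe": matrix(block_size, n_embd),
    "wq": matrix(n_embd, n_embd), "wk": matrix(n_embd, n_embd),
    "wv": matrix(n_embd, n_embd), "wo": matrix(n_embd, n_embd),
    "fc1": matrix(4 * n_embd, n_embd), "fc2": matrix(n_embd, 4 * n_embd),
    "lm_head": matrix(vocab_size, n_embd),
}


def gpt_step(token_id, pos_id, keys, values):
    """Process one token; keys and values are the KV cache, appended in place."""
    # Embeddings: token vector plus position vector
    x = [t + s for t, s in zip(p["wte"][token_id], p["wpe"][pos_id])]

    # Attention block (single head) with residual connection
    h = rmsnorm(x)
    q = linear(h, p["wq"])
    keys.append(linear(h, p["wk"]))
    values.append(linear(h, p["wv"]))
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(n_embd) for k in keys]
    weights = softmax(scores)
    attn = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(n_embd)]
    x = [a + b for a, b in zip(x, linear(attn, p["wo"]))]

    # MLP block with residual connection
    h = rmsnorm(x)
    h = [max(0.0, hi) for hi in linear(h, p["fc1"])]  # ReLU
    x = [a + b for a, b in zip(x, linear(h, p["fc2"]))]

    # Output: one logit per vocabulary entry
    return linear(x, p["lm_head"])


keys, values = [], []
for pos, tok in enumerate([26, 4, 12]):  # e.g. BOS followed by two characters
    logits = gpt_step(tok, pos, keys, values)
print(len(logits), len(keys))  # 27 3
```

Because the cache only ever contains the current and earlier positions, no explicit causal mask is needed: a token cannot attend to anything that has not been processed yet.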

Training Loop

The training loop repeatedly: (1) picks a document, (2) runs the model forward over its tokens, (3) computes a loss, (4) backpropagates to get gradients, and (5) updates the parameters.

Here’s a breakdown of the training loop:

- Tokenization: Each training step picks one document and wraps it with BOS on both sides.

- Forward pass and loss: We feed the tokens through the model one at a time, building up the KV cache as we go. The loss at each position is the negative log probability of the correct next token.

- Backward pass: One call to loss.backward() runs backpropagation through the entire computation graph.

- Adam optimizer: We use the Adam optimizer to update the parameters based on the corresponding gradients.
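
For the last step in the list, here is a minimal sketch of an Adam update on a flat list of floats, paired with the linear learning rate decay the article attributes to microgpt further down. The function name `adam_step`, the hyperparameter values, and the toy objective are all assumptions for illustration.

```python
import math


def adam_step(params, grads, m, v, step, lr=0.01, beta1=0.9, beta2=0.999, eps=1e-8):
    """Update params in place; m and v hold the running moment estimates."""
    for i, g in enumerate(grads):
        m[i] = beta1 * m[i] + (1 - beta1) * g      # running mean of gradients
        v[i] = beta2 * v[i] + (1 - beta2) * g * g  # running mean of squared gradients
        m_hat = m[i] / (1 - beta1 ** step)         # bias correction for early steps
        v_hat = v[i] / (1 - beta2 ** step)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)


# Toy problem: minimize f(x) = (x - 3)^2, whose gradient is 2 * (x - 3)
params, m, v = [0.0], [0.0], [0.0]
num_steps = 1000
for step in range(1, num_steps + 1):
    grads = [2 * (params[0] - 3.0)]
    lr_t = 0.05 * (1 - (step - 1) / num_steps)  # linear decay toward zero
    adam_step(params, grads, m, v, step, lr=lr_t)
print(params[0])  # should end up close to 3.0
```

In the real training loop the gradients would come from loss.backward() rather than from a hand-written derivative.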

Over 1,000 steps, the loss decreases from around 3.3 (random guessing among 27 tokens) down to around 2.37. Lower is better, and the lowest possible is 0 (perfect predictions).
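
The starting value of about 3.3 can be checked directly: a model that spreads its probability evenly over 27 tokens has a loss of ln(27). The sketch below uses plain floats and hypothetical logits to show this.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log probability assigned to the correct next token."""
    return -math.log(softmax(logits)[target])


vocab_size = 27
uniform_logits = [0.0] * vocab_size  # an untrained model guessing uniformly
print(round(cross_entropy(uniform_logits, 5), 4))  # 3.2958, i.e. ln(27)

confident_logits = [0.0] * vocab_size
confident_logits[5] = 10.0  # strongly favors the correct token
print(cross_entropy(confident_logits, 5) < 0.01)  # True
```

A final loss of around 2.37 corresponds to giving the correct next character a probability of roughly exp(-2.37), or about 9%, compared with about 4% for uniform guessing.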

Inference

Once training is done, we can sample new names from the model. The parameters are frozen, and we just run the forward pass in a loop, feeding each generated token back as the next input.

We start each sample with the BOS token, which tells the model “begin a new name.” The model produces 27 logits, we convert them to probabilities, and we randomly sample one token according to those probabilities. That token gets fed back in as the next input, and we repeat until the model produces BOS again (meaning “I’m done”) or we hit the maximum sequence length.

The temperature parameter controls randomness. Before softmax, we divide the logits by the temperature. A temperature of 1.0 samples directly from the model’s learned distribution. Lower temperatures (like 0.5 here) sharpen the distribution, making the model more conservative and likely to pick its top choices.
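
The effect of temperature is easy to see on a toy example. The sketch below assumes a hypothetical three-token vocabulary and a helper named `sample_token`; it is an illustration rather than the script's sampling code.

```python
import math
import random


def sample_token(logits, temperature=1.0):
    """Divide logits by temperature, apply softmax, and sample one token ID."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    token = random.choices(range(len(probs)), weights=probs)[0]
    return token, probs


random.seed(0)
logits = [2.0, 1.0, 0.0]  # hypothetical logits for a 3-token vocabulary
_, probs_warm = sample_token(logits, temperature=1.0)
_, probs_cool = sample_token(logits, temperature=0.5)
print([round(p, 3) for p in probs_warm])  # [0.665, 0.245, 0.09]
print([round(p, 3) for p in probs_cool])  # [0.867, 0.117, 0.016]
```

Halving the temperature doubles every gap between logits, so the top choice goes from about 67% to about 87% of the probability mass.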

Run It

All you need is Python (no pip install, no dependencies). The script takes about 1 minute to run on a MacBook. You’ll see the loss printed at each step, and at the end, you’ll see the generated names.

You can also try it directly in a Google Colab notebook and ask Gemini questions about it. Try playing with the script! You can swap in a different dataset, train for longer, or increase the size of the model to get progressively better results.

Progression

To see the code built up piece by piece, the suggested progression looks something like this:

- train0.py: Bigram count table — no neural net, no gradients.

- train1.py: MLP + manual gradients (numerical & analytic) + SGD.

- train2.py: Autograd (Value class) — replaces manual gradients.

- train3.py: Position embeddings + single-head attention + rmsnorm + residuals.

- train4.py: Multi-head attention + layer loop — full GPT architecture.

- train5.py: Adam optimizer — this is train.py.
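
As a rough idea of the starting point of this progression, a bigram count table with no neural net and no gradients might look like the sketch below. It is not the contents of train0.py: the three-name dataset, the "." end marker, and the `generate` helper are assumptions for illustration.

```python
import random

random.seed(0)
docs = ["emma", "anna", "lara"]  # hypothetical stand-in for the names dataset
BOS = "."                        # assumed marker for the start and end of a name

# Count how often each character follows each other character
counts = {}
for doc in docs:
    seq = [BOS] + list(doc) + [BOS]
    for prev, nxt in zip(seq, seq[1:]):
        counts.setdefault(prev, {})
        counts[prev][nxt] = counts[prev].get(nxt, 0) + 1


def generate(max_len=16):
    """Sample characters from the count table until BOS comes up again."""
    out, prev = [], BOS
    for _ in range(max_len):
        followers = counts[prev]
        prev = random.choices(list(followers), weights=list(followers.values()))[0]
        if prev == BOS:
            break
        out.append(prev)
    return "".join(out)


print(counts["m"])  # {'m': 1, 'a': 1}
print(generate())
```

Each later step in the progression replaces part of this table lookup with learned parameters, while the sampling loop stays essentially the same.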

I created a Gist called build_microgpt.py, where in the Revisions you can see all of these versions and the diffs between each step.

Real Stuff

microgpt contains the complete algorithmic essence of training and running a GPT. But between this and a production LLM like ChatGPT, there is a long list of things that change. None of them alters the core algorithm or the overall layout, but they are what make it work at scale.

Here’s a breakdown of the differences:

- Data: Instead of 32K short names, production models train on trillions of tokens of internet text.

- Tokenizer: Instead of single characters, production models use subword tokenizers like BPE.

- Autograd: microgpt operates on scalar Value objects in pure Python. Production systems use tensors and run on GPUs/TPUs.

- Architecture: microgpt has 4,192 parameters. GPT-4 class models have hundreds of billions.

- Training: Instead of one document per step, production training uses large batches, gradient accumulation, mixed precision, and careful hyperparameter tuning.

- Optimization: microgpt uses Adam with a simple linear learning rate decay. At scale, optimization becomes its own discipline.

- Post-training: The base model that comes out of training is a document completer, not a chatbot. Turning it into ChatGPT happens in two stages: SFT (Supervised Fine-Tuning) and RL (Reinforcement Learning).

- Inference: Serving a model to millions of users requires its own engineering stack: batching requests together, KV cache management and paging, speculative decoding for speed, quantization, and distributing the model across multiple GPUs.

All of these are important engineering and research contributions, but if you understand microgpt, you understand the algorithmic essence.

FAQ

Does the model “understand” anything? That’s a philosophical question, but mechanically: no magic is happening. The model is a big math function that maps input tokens to a probability distribution over the next token.

Why does it work? The model has thousands of adjustable parameters, and the optimizer nudges them a tiny bit each step to make the loss go down. Over many steps, the parameters settle into values that capture the statistical regularities of the data.

How is this related to ChatGPT? ChatGPT is this same core loop (predict next token, sample, repeat) scaled up enormously, with post-training to make it conversational.

What’s the deal with “hallucinations”? The model generates tokens by sampling from a probability distribution. It has no concept of truth; it only knows which sequences are statistically plausible given the training data.

Why is it so slow? microgpt processes one scalar at a time in pure Python, so even this tiny model needs about a minute for 1,000 training steps. The same math on a GPU processes millions of scalars in parallel.

Can I make it generate better names? Yes. Train longer, make the model bigger, or use a larger dataset.

What if I change the dataset? The model will learn whatever patterns are in the data. Swap in a file of city names, Pokemon names, English words, or short poems, and the model will learn to generate those instead.


