Running AI models is turning into a memory game

The Memory Crisis: Why AI’s Next Billion-Dollar Battleground Is Already Here

The AI industry is obsessed with GPUs, but there’s a silent revolution happening in the background that could determine which companies survive the next two years. While everyone’s watching Nvidia’s stock price and speculating about the next GPU architecture, memory prices have quietly exploded—DRAM chips are now 7x more expensive than they were just 12 months ago. This isn’t just a supply chain hiccup; it’s the foundation of a new competitive arms race that’s about to reshape the entire AI landscape.

When hyperscalers like Amazon, Google, and Microsoft announce their next-generation data center builds, they’re not just buying GPUs anymore. They’re placing massive orders for DRAM that will cost billions, and the companies that figure out how to optimize this memory usage will have an enormous competitive advantage. If a competitor can deliver the same results with, say, 30% fewer tokens, it pays less for every request it serves, and for a company running on thin inference margins that can be the difference between staying in business and folding.

The complexity of this challenge became clear when I dug into Anthropic’s prompt-caching documentation. Six months ago, the caching page amounted to a single piece of advice: “Use caching, it’s cheaper.” Now it’s a dense technical manual covering pricing tiers, cache-write pre-purchase options, and optimization strategies. You can buy 5-minute cache windows or 1-hour windows, and which one pays off depends on how often the cached content actually gets reused. But here’s the catch: every new piece of data you add to a query might push something else out of that cache window.
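To make the window trade-off concrete, here is a minimal sketch of the arithmetic in Python. The two window lengths come from the paragraph above; everything else is an assumption for illustration, including the `CacheTier` type, the write and read multipliers, and the rule that a cache read refreshes the window. Check the provider's current pricing page before relying on any of these numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CacheTier:
    name: str
    ttl_seconds: int
    write_multiplier: float  # cost of writing one cached token, relative to a normal input token
    read_multiplier: float   # cost of reading one cached token, relative to a normal input token


# Hypothetical multipliers, chosen only to illustrate the trade-off.
FIVE_MINUTE = CacheTier("5m", 300, 1.25, 0.10)
ONE_HOUR = CacheTier("1h", 3600, 2.00, 0.10)


def cached_prefix_cost(tier: CacheTier, prefix_tokens: int, gaps_seconds: list[float]) -> float:
    """Cost (in base-input-token units) of serving one shared prefix across a request sequence.

    gaps_seconds[i] is the idle time between request i and request i + 1.
    The first request always pays for a cache write. A later request pays the
    read price if it arrives within the TTL, otherwise it pays for a new write.
    Assumption: every hit or rewrite restarts the TTL clock.
    """
    cost = prefix_tokens * tier.write_multiplier
    for gap in gaps_seconds:
        if gap <= tier.ttl_seconds:
            cost += prefix_tokens * tier.read_multiplier
        else:
            cost += prefix_tokens * tier.write_multiplier
    return cost


def uncached_prefix_cost(prefix_tokens: int, gaps_seconds: list[float]) -> float:
    """Cost of resending the same prefix at full price on every request."""
    return float(prefix_tokens * (1 + len(gaps_seconds)))


if __name__ == "__main__":
    gaps = [60, 600, 60]  # one ten-minute lull in otherwise steady traffic
    for tier in (FIVE_MINUTE, ONE_HOUR):
        print(tier.name, cached_prefix_cost(tier, 10_000, gaps))
    print("uncached", uncached_prefix_cost(10_000, gaps))
```

With a 10,000-token prefix and that traffic pattern, the assumed 5-minute tier comes out to about 27,000 base-token units, the 1-hour tier to about 23,000, and no caching to 40,000. The single ten-minute lull is what flips the result: the shorter window expires and pays for a second write, while the longer one absorbs the gap. With steadier traffic the cheaper write of the short window wins.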

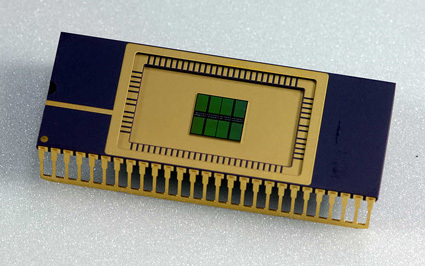

This is where the problem gets practical for AI companies. Managing memory isn’t just about buying more chips. It’s about orchestrating different memory types, from the expensive high-bandwidth memory (HBM) packaged alongside GPUs to the more affordable DRAM, understanding when to use each, and structuring your model swarms so that as many requests as possible hit a shared cache. The companies that master this orchestration will be able to deliver the same AI capabilities with significantly fewer computational resources.

The implications extend far beyond just cost savings. As memory orchestration improves, we’re going to see a democratization of AI capabilities. Applications that seem economically impossible today—real-time video analysis, complex multi-agent systems, massive language model swarms—will suddenly become viable. The companies that crack this code won’t just save money; they’ll unlock entirely new categories of AI applications that were previously constrained by memory costs.

But here’s what makes this particularly interesting: while everyone’s focused on the hardware side of this equation, there’s a massive software opportunity emerging. Companies like Tensormesh are already working on cache optimization at the infrastructure level, but the real gold mine might be in the application layer. How do you structure your prompts? How do you design your agent architectures to minimize cache misses? These are becoming the critical questions that separate the winners from the losers in AI.
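One way to reason about the prompt-structure question is to measure how much of a prompt repeats, in the same order, from one request to the next. The sketch below assumes a cache that can only reuse an exact leading prefix, which is how prompt caches are commonly described; the segment-level comparison and the function names (`build_prompt`, `reusable_fraction`) are hypothetical simplifications, not any vendor's API.

```python
def shared_prefix_len(a: list[str], b: list[str]) -> int:
    """Number of leading segments that are identical in both prompts."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def build_prompt(system: str, documents: list[str], question: str, *, stable_first: bool) -> list[str]:
    """Assemble a prompt as an ordered list of segments.

    stable_first=True puts the content that rarely changes (instructions,
    reference documents) ahead of the per-request question.
    """
    if stable_first:
        return [system, *documents, question]
    return [question, system, *documents]


def reusable_fraction(previous: list[str], current: list[str]) -> float:
    """Share of the current prompt's characters that fall inside the prefix it shares with the previous prompt."""
    shared = shared_prefix_len(previous, current)
    total = sum(len(segment) for segment in current)
    if total == 0:
        return 0.0
    return sum(len(segment) for segment in current[:shared]) / total


if __name__ == "__main__":
    system = "You are a support agent for an online store."
    docs = ["Refund policy: ...", "Warranty policy: ..."]
    for stable_first in (True, False):
        first = build_prompt(system, docs, "How do refunds work?", stable_first=stable_first)
        second = build_prompt(system, docs, "Is my laptop covered?", stable_first=stable_first)
        print(stable_first, round(reusable_fraction(first, second), 2))
```

Under these assumptions, putting the changing question first leaves nothing reusable between requests, while putting it last lets the instructions and documents count as a shared prefix. The same logic extends to agent designs: agents that start from an identical leading context can share one cached prefix, and any per-agent detail inserted early breaks that sharing.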

The timing couldn’t be more perfect—or more dangerous. We’re at an inflection point where memory costs are skyrocketing just as AI models are becoming more efficient at processing tokens. This creates a unique window where optimization expertise can provide massive competitive advantages. But it’s also a ticking time bomb: if you don’t figure this out quickly, your competitors will, and they’ll be able to undercut you on price while delivering better performance.

What’s particularly fascinating is how this shifts the power dynamics in the AI industry. For years, the narrative has been that GPU manufacturers and model developers hold all the cards. But suddenly, memory orchestration expertise is becoming just as valuable as having the best model architecture. We’re seeing a new class of AI companies emerge—not the ones building the biggest models, but the ones figuring out how to make those models run more efficiently on existing hardware.

The next 18 months will be crucial. Companies that invest heavily in memory optimization now will have first-mover advantages that could last for years. Those that treat this as a secondary concern will find themselves at a severe competitive disadvantage, unable to compete on price or performance. It’s not enough to have the best model anymore; you need to have the best memory strategy.

And let’s be clear about what’s at stake: we’re talking about billions of dollars in infrastructure costs, potentially trillions in market capitalization for the companies that get this right. The AI companies that survive the next funding winter won’t necessarily be the ones with the most impressive demos or the biggest models. They’ll be the ones who figured out how to make every memory chip count.

This is the quiet revolution that gets little attention outside the industry, even though the people building these systems can see it coming. The memory crisis isn’t just a technical challenge. It’s the next great competitive battleground in AI, and the companies that understand this will define the next decade of technological progress.
