Want to make the most of the new Gemma 4 AI models? RTX GPUs and PCs accelerate local AI like never before

NVIDIA RTX GPUs Unlock the Full Potential of Google’s Gemma 4 AI Models

Google’s newly launched Gemma 4 family of AI models is making waves in the local AI community, offering a powerful blend of speed, efficiency, and versatility. Designed for fast, on-device deployment, these models are optimized to run on NVIDIA RTX-powered PCs and workstations, as well as compact systems such as the NVIDIA DGX Spark and the Jetson Orin Nano.

The synergy between Gemma 4 and NVIDIA RTX hardware is no coincidence—Google and NVIDIA have collaborated closely to ensure these models run at peak performance on RTX GPUs. This partnership unlocks unprecedented local AI capabilities, making RTX-powered systems the ideal platform for developers, enthusiasts, and professionals alike.

RTX PCs: The Ultimate Platform for Gemma 4 Inference

Gemma 4 models are engineered to excel in reasoning, problem-solving, code generation, debugging, and even advanced video and audio processing. They also support multilingual tasks, making them accessible to users worldwide. To truly harness their potential, however, you need the right hardware, and that’s where NVIDIA RTX GPUs shine.

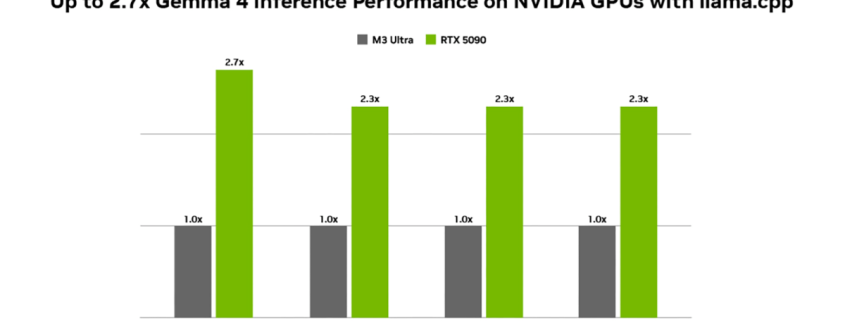

Running Gemma 4-31B on an NVIDIA RTX 5090 delivers nearly three times the performance of high-end alternatives like the Apple M3 Ultra. The smaller Gemma 4-26B-A4B and Gemma 4-E4B models also see more than double the inference performance on RTX 5090 hardware. This boost in speed and efficiency comes from RTX’s dedicated Tensor Cores, which are designed to handle demanding AI workloads with minimal latency.

Gemma 4 models are fully compatible with llama.cpp and Ollama, both of which have been optimized for RTX GPUs. This ensures fast, responsive local AI performance, whether you’re running agents, automating tasks, or building custom workflows.
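For readers who want to try this from a script, here is a minimal sketch of sending a prompt to a locally running Ollama server over its default HTTP endpoint. The model tag `gemma4` is a placeholder assumed for illustration, not a confirmed name, so check `ollama list` for the tag actually installed on your machine. The helper names `build_request` and `generate` are also illustrative.

```python
import json
import urllib.request

# Default address of a local Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Hypothetical model tag (assumption): replace with the tag shown by `ollama list`.
MODEL_TAG = "gemma4"


def build_request(prompt, model=MODEL_TAG, url=OLLAMA_URL):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model=MODEL_TAG, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read())
    # With "stream": False, Ollama returns one JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

With Ollama running and a model pulled, `generate("Summarize this changelog in three bullets.")` returns the completion as a string. Nothing leaves the machine, because the request only goes to `localhost`.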

Accelerated Fine-Tuning for Personalized AI

One of the standout features of running AI models locally is the ability to fine-tune them with your own data. Fine-tuning continues training a pretrained model on examples you supply, transforming it from a general-purpose tool into a bespoke solution tailored to your specific needs. This can significantly improve response quality and align the model’s outputs with your business or personal workflows.

NVIDIA provides best-in-class support for fine-tuning through popular tools built on PyTorch and optimized for RTX GPUs. With Gemma 4 models, you get access to some of the most advanced local AI for reasoning and coding, and NVIDIA’s fine-tuning tools let you personalize them to fit your needs.
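To give a sense of why fine-tuning is practical on a single GPU, the sketch below works through the arithmetic for LoRA, one common parameter-efficient fine-tuning method. The article does not say which method NVIDIA’s tools use, so LoRA is an assumption here, and the 4096 x 4096 layer size is a made-up example rather than a Gemma 4 dimension. LoRA freezes a weight matrix of shape `d_out x d_in` and trains two small matrices of shapes `d_out x rank` and `rank x d_in` instead.

```python
def full_params(d_out, d_in):
    """Number of weights in one dense layer of shape d_out x d_in."""
    return d_out * d_in


def lora_trainable_params(d_out, d_in, rank):
    """Trainable weights when the layer is frozen and a LoRA adapter is added.

    The adapter is two matrices, (d_out x rank) and (rank x d_in).
    """
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
D_OUT, D_IN, RANK = 4096, 4096, 16

dense = full_params(D_OUT, D_IN)
adapter = lora_trainable_params(D_OUT, D_IN, RANK)
print(f"dense layer weights:    {dense:,}")
print(f"LoRA adapter weights:   {adapter:,}")
print(f"trainable fraction:     {adapter / dense:.4%}")
```

For this example layer, the adapter trains fewer than 1% of the weights the dense layer holds, which is why optimizer state and gradients take far less VRAM than full retraining.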

Ready for the Future of AI

The AI landscape is evolving at breakneck speed, and staying ahead of the curve can be challenging. The best way to ensure you’re always ready to leverage the latest advancements is to have an NVIDIA RTX GPU at your disposal. The RTX 50 Series, powered by the cutting-edge Blackwell architecture, offers ample VRAM to load Gemma 4 models and a host of other AI workloads.

These GPUs are equipped with the latest-generation AI-accelerating Tensor Cores, delivering unparalleled performance for training and inference. The CUDA-compatible toolkits provide complete control over model selection, quantization, parameter tweaking, and custom workflows, giving you the flexibility to experiment and innovate.

Memory Efficiency: A Game-Changer for Local AI

Memory optimization is critical for local AI models, as they must operate efficiently within the constraints of on-device hardware. NVIDIA has been at the forefront of memory optimization for years, pioneering the use of NVFP4, a 4-bit floating-point format that reduces VRAM consumption by up to 60% on Blackwell-based GPUs.
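A rough back-of-the-envelope sketch shows why bit width matters so much for fitting a model in VRAM. It counts weights only and ignores the KV cache, activations, and runtime overhead, which is why a `headroom_gib` margin is included. The 31-billion parameter count is inferred from the model name, and the NVFP4 layout used here (one 8-bit scale shared by each block of 16 values, about 4.5 bits per weight) is an assumption for illustration; this arithmetic is not meant to reproduce the 60% figure above.

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_memory_gib(num_params, bits_per_weight):
    """Memory needed for the model weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def nvfp4_effective_bits(block_size=16, scale_bits=8):
    """Approximate bits per weight for a 4-bit block-scaled format.

    Assumption: each block of `block_size` values shares one scale
    of `scale_bits` bits, on top of the 4 bits stored per value.
    """
    return 4 + scale_bits / block_size


def fits_in_vram(num_params, bits_per_weight, vram_gib, headroom_gib=2.0):
    """True if the weights plus a safety margin fit in the given VRAM."""
    return weight_memory_gib(num_params, bits_per_weight) + headroom_gib <= vram_gib


PARAMS = 31e9   # assumed from the "31B" in the model name
VRAM_GIB = 32   # RTX 5090 memory size, treated as GiB for simplicity

for label, bits in [("16-bit", 16), ("8-bit", 8), ("NVFP4 (approx.)", nvfp4_effective_bits())]:
    size = weight_memory_gib(PARAMS, bits)
    print(f"{label:16s} {size:6.1f} GiB  fits: {fits_in_vram(PARAMS, bits, VRAM_GIB)}")
```

Under these assumptions, the 16-bit weights alone exceed 32 GB, while the 4-bit version leaves room for context and other workloads.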

When combined with NVIDIA’s fifth-generation Tensor Cores, this optimization enables AI acceleration to reach new heights. The latest RTX GPUs can handle complex tasks in a fraction of the time required by even high-powered alternatives, such as Apple’s latest MacBooks.

Why RTX is the Best Choice for Local AI

While cloud computing remains essential for the most capable AI models, local AI offers unique advantages that cannot be ignored. For starters, running AI locally ensures data privacy—sensitive information never leaves your system, keeping it entirely under your control. This is especially crucial for organizations and individuals handling confidential data or leveraging agentic AI to perform tasks on their PCs.

Local AI also simplifies context management. Instead of uploading massive amounts of data to the cloud, where privacy concerns arise and network latency can cause delays, local AI has everything it needs right at hand. Fine-tuning and follow-up tasks are also easier and more efficient.

From a cost perspective, local AI on RTX hardware allows you to manage expenses at every stage—from initial purchase to deployment and maintenance. There’s no need for cloud AI subscriptions or long-term token fees. Just supply the energy, and your NVIDIA GeForce RTX AI graphics card will handle the rest.

A Wide Range of RTX 50 Series Options

NVIDIA offers a diverse lineup of AI-capable RTX 50 Series graphics cards, ensuring there’s a solution for every need. All Blackwell-based cards are equipped with the latest-generation AI-accelerating Tensor Cores, delivering advanced AI capabilities. From flagship models like the RTX 5090 and its professional counterpart, the RTX PRO 6000, to the powerful RTX 5080, there’s a card for every level of AI development and tuning.

With NVIDIA RTX GPUs, you’re not just keeping up with the latest AI advancements—you’re setting the pace. Whether you’re a developer, enthusiast, or professional, RTX-powered systems provide the performance, efficiency, and flexibility you need to unlock the full potential of local AI.


