My first impressions of ROCm and Strix Halo

AMD Strix Halo + ROCm: First Impressions and Setup Guide

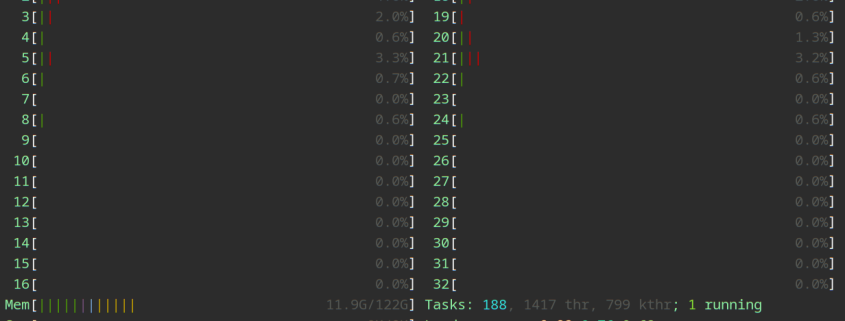

The AMD Strix Halo has arrived, and it is not just another mobile processor: it is a full-fledged computing powerhouse that blurs the line between CPU and GPU. With 128GB of unified memory shared between the CPU and the integrated GPU, this beast is ready to tackle everything from machine learning experiments to local AI inference. Here is my deep dive into setting up ROCm (Radeon Open Compute) on this cutting-edge hardware.

Initial Setup: BIOS Configuration

Before touching any software, I had to update the BIOS. Without the update, PyTorch couldn't detect the GPU; frustrating, but easily fixed with the BIOS's built-in Wi-Fi update feature.

The real magic happens in the memory configuration. I set the BIOS-reserved video memory (the dedicated carve-out) to a minimal 512MB and let the GPU use the rest of system RAM through the Graphics Translation Table (GTT), which the kernel manages dynamically. This approach offers several advantages:

- Flexibility: The CPU cannot use memory reserved for the GPU, so keeping the carve-out small leaves almost all of the 128GB available to the CPU, while the GPU can still draw on the whole pool (Reserved + GTT)

- Efficiency: Drawing on both pools at once can add some fragmentation overhead, but in my experience dynamic sharing works better than a large static allocation

- Compatibility: Most modern applications handle this configuration well, though some older games may balk at seeing only 512MB of dedicated VRAM
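To check how the carve-out and the GTT pool actually come out on a running system, you can read the sizes the amdgpu driver reports in sysfs. The sketch below is a minimal example under stated assumptions: the GPU appears as /sys/class/drm/cardN with amdgpu's mem_info_vram_total and mem_info_gtt_total files (values in bytes), and gpu_memory_pools is a hypothetical helper name, not part of any tool. With the 512MB setting, the VRAM figure should come out at about 0.5 GiB.

```python
from pathlib import Path

GIB = 1024 ** 3


def gpu_memory_pools(sysfs_root: str = "/sys/class/drm") -> dict[str, dict[str, float]]:
    """Hypothetical helper: report the reserved VRAM and GTT sizes (GiB) per amdgpu card.

    Assumes amdgpu's mem_info_* sysfs files; returns {} on machines without them.
    """
    pools: dict[str, dict[str, float]] = {}
    for card in sorted(Path(sysfs_root).glob("card*")):
        if not card.name[4:].isdigit():
            continue  # skip connector entries such as card1-eDP-1
        vram_file = card / "device" / "mem_info_vram_total"
        gtt_file = card / "device" / "mem_info_gtt_total"
        if not (vram_file.is_file() and gtt_file.is_file()):
            continue  # not an amdgpu device
        vram_gib = int(vram_file.read_text()) / GIB
        gtt_gib = int(gtt_file.read_text()) / GIB
        pools[card.name] = {
            "reserved_vram_gib": round(vram_gib, 2),
            "gtt_gib": round(gtt_gib, 2),
            # The GPU can allocate from both pools; the CPU cannot touch the carve-out.
            "gpu_total_gib": round(vram_gib + gtt_gib, 2),
        }
    return pools


if __name__ == "__main__":
    for name, sizes in gpu_memory_pools().items():
        print(name, sizes)
```

Running it before and after changing the BIOS setting or the kernel parameters is a quick way to confirm the change took effect.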

Kernel Parameters and GRUB Configuration

To optimize memory management, I modified /etc/default/grub with these parameters:

```bash
GRUB_CMDLINE_LINUX_DEFAULT="quiet splash ttm.pages_limit=32768000 amdgpu.gttsize=114688"
```

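After running sudo update-grub and rebooting, the system was ready to use the shared memory architecture in full. The two parameters use different units: ttm.pages_limit caps how many 4 KiB pages the kernel's TTM memory manager may allocate (32,768,000 pages is 125 GiB), and amdgpu.gttsize sets the GTT size in MiB (114,688 MiB is 112 GiB). Keeping the GPU's share below the full 128GB leaves headroom for the OS and kernel (approximately 12GB in this case), which keeps the system stable.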
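Because one of these parameters counts pages and the other counts MiB, it is easy to mis-scale one of them. Here is a small Python sketch for converting between GiB and both units, assuming ttm.pages_limit is measured in standard 4 KiB pages (the x86-64 default) and amdgpu.gttsize in MiB; both function names are hypothetical.

```python
PAGE_SIZE = 4096  # bytes; assumes standard 4 KiB pages (the x86-64 default)
MIB = 1024 ** 2
GIB = 1024 ** 3


def kernel_params_for(gtt_gib: int, ttm_gib: int) -> str:
    """Hypothetical helper: build the kernel arguments for limits given in GiB."""
    pages_limit = ttm_gib * GIB // PAGE_SIZE  # ttm.pages_limit is a page count
    gttsize_mib = gtt_gib * GIB // MIB        # amdgpu.gttsize is in MiB
    return f"ttm.pages_limit={pages_limit} amdgpu.gttsize={gttsize_mib}"


def describe(pages_limit: int, gttsize_mib: int) -> dict[str, float]:
    """Hypothetical helper: convert existing kernel arguments back into GiB."""
    return {
        "ttm_limit_gib": pages_limit * PAGE_SIZE / GIB,
        "gtt_gib": gttsize_mib * MIB / GIB,
    }


if __name__ == "__main__":
    print(describe(32768000, 114688))  # {'ttm_limit_gib': 125.0, 'gtt_gib': 112.0}
    print(kernel_params_for(gtt_gib=112, ttm_gib=125))
    # ttm.pages_limit=32768000 amdgpu.gttsize=114688
```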

PyTorch Installation: The Dependency Maze

Getting PyTorch working with ROCm meant navigating a complex dependency graph. What finally worked was a pyproject.toml for uv that pins the ROCm build of torch and routes it to PyTorch's ROCm wheel index:

```toml
[project]
name = "myproject"
version = "0.1.0"
description = "Add your description here"
readme = "README.md"
requires-python = ">=3.13"
dependencies = [
    "torch==2.11.0+rocm7.2",
    "triton-rocm",
]

[tool.uv]
environments = ["sys_platform == 'linux'"]

[[tool.uv.index]]
name = "pytorch-rocm"
url = "https://download.pytorch.org/whl/rocm7.2"
explicit = true

[tool.uv.sources]
torch = { index = "pytorch-rocm" }
torchvision = { index = "pytorch-rocm" }
triton-rocm = { index = "pytorch-rocm" }
```

For quick experiments outside a project, I added a bash alias that starts IPython with the ROCm build of PyTorch and prints whether the GPU is visible:

```bash
# Inner double quotes are escaped (\") so the -c string is not cut short
alias pytorch='uvx --extra-index-url https://download.pytorch.org/whl/rocm7.2 \
  --index-strategy unsafe-best-match \
  --with torch==2.11.0+rocm7.2,triton-rocm \
  ipython -c "import torch; print(f\"ROCm: {torch.version.hip}\"); print(f\"GPU available: {torch.cuda.is_available()}\"); import torch.nn as nn" -i'
```
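The alias is handy interactively; for scripts or CI, the same check can live in a plain Python file. This is a minimal sketch (rocm_report is a hypothetical name) that relies on documented PyTorch attributes: torch.version.hip holds a version string on ROCm builds and None otherwise, and ROCm builds expose AMD GPUs through the torch.cuda namespace. It also runs a small matrix multiply, since a device can be listed yet still fail when a kernel actually runs.

```python
import importlib.util


def rocm_report() -> dict:
    """Hypothetical helper: summarise what this PyTorch build can see.

    Safe to run even when torch is not installed.
    """
    if importlib.util.find_spec("torch") is None:
        return {"torch": None, "hip": None, "gpu": False}

    import torch

    report = {
        "torch": str(torch.__version__),
        # A HIP version string on ROCm builds, None on CUDA and CPU-only builds.
        "hip": torch.version.hip,
        # ROCm builds expose AMD GPUs through the torch.cuda namespace.
        "gpu": torch.cuda.is_available(),
    }
    if report["gpu"]:
        report["device"] = torch.cuda.get_device_name(0)
        # A small matmul confirms kernels actually run, not just that a device is listed.
        x = torch.randn(1024, 1024, device="cuda")
        report["matmul_ok"] = bool(torch.isfinite(x @ x).all().item())
    return report


if __name__ == "__main__":
    for key, value in rocm_report().items():
        print(f"{key}: {value}")
```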

AI Inference with Llama.cpp

The real excitement came from running large language models locally. Using Podman and llama.cpp's ROCm server image, I served Qwen3.6 with an impressive 327,680-token context window:

```bash
podman run --rm -it --name qwen-coder --device /dev/kfd --device /dev/dri \
  --security-opt label=disable --group-add keep-groups -e HSA_OVERRIDE_GFX_VERSION=11.5.0 \
  -p 8080:8080 -v /some_path/models:/models:z ghcr.io/ggml-org/llama.cpp:server-rocm \
  -m /models/qwen3.6/model.gguf -ngl 99 -c 327680 --host 0.0.0.0 --port 8080 \
  --flash-attn on --no-mmap
```
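Much of the extra memory a long context costs goes to the KV cache, which grows linearly with context length: with standard attention, every layer stores one K and one V vector per KV head per token. The sketch below estimates that size. The layer count, KV-head count, and head dimension in the example are illustrative placeholders, not Qwen3.6's real architecture (llama.cpp prints the model's actual hyperparameters when it loads the GGUF), and models that mix in linear-attention layers need less.

```python
def kv_cache_bytes(n_ctx: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2) -> int:
    """Estimate KV-cache size for standard (full) attention.

    2 tensors (K and V) per layer, each n_kv_heads * head_dim values per token.
    bytes_per_elem=2 corresponds to an f16 cache.
    """
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem


if __name__ == "__main__":
    # Hypothetical architecture numbers, for illustration only.
    size = kv_cache_bytes(n_ctx=327_680, n_layers=48, n_kv_heads=4, head_dim=128)
    print(f"{size / 1024**3:.1f} GiB")  # 30.0 GiB with these placeholder numbers
```

Because the estimate is linear in n_ctx, the context size is the most direct knob to turn if the server fails to allocate memory at startup.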

The model download and conversion process was straightforward:

```bash
uvx hf download Qwen/Qwen3.6-35B-A3B --local-dir /some_path/models/qwen3.6
git clone https://github.com/ggerganov/llama.cpp.git /some_path/llama.cpp
cd /some_path/models/qwen3.6 && \
  uvx --extra-index-url https://download.pytorch.org/whl/rocm7.2 \
  --index-strategy unsafe-best-match \
  --with torch==2.11.0+rocm7.2,triton-rocm,transformers \
  ipython /some_path/llama.cpp/convert_hf_to_gguf.py \
  -- . --outfile model.gguf
```

Integration with Opencode

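If you use Opencode as a coding assistant, you can point it at the local llama.cpp server. This configuration registers the server as a local provider, makes the Qwen3.6 model (qwen-coder-local) the default, and sets permissions: reads are allowed except for .env files and the secrets directory, the shell, edit, search, and web-fetch tools are allowed, and anything not listed asks first: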

```json
{
  "$schema": "https://opencode.ai/config.json",
  "provider": {
    "local": {
      "options": {
        "baseURL": "http://localhost:8080/v1",
        "apiKey": "any-string",
        "reasoningEffort": "auto",
        "textVerbosity": "high",
        "supportsToolCalls": true
      },
      "models": {
        "qwen-coder-local": {}
      }
    }
  },
  "model": "local/qwen-coder-local",
  "permission": {
    "*": "ask",
    "read": {
      "*": "allow",
      "*.env": "deny",
      "/secrets/": "deny"
    },
    "bash": "allow",
    "edit": "allow",
    "glob": "allow",
    "grep": "allow",
    "websearch": "allow",
    "codesearch": "allow",
    "webfetch": "allow"
  },
  "disabled_providers": [
    "opencode"
  ]
}
```
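Before pointing Opencode at the server, it helps to confirm the endpoint answers the way an OpenAI-style client expects. llama.cpp's server exposes POST /v1/chat/completions, and the sketch below builds that request with only the standard library. The base URL matches the baseURL in the config; the model name is just the label from the config (as I understand it, a single-model llama-server answers with whatever it loaded), and build_chat_request and chat are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # same baseURL as the Opencode config


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = "qwen-coder-local",
                       api_key: str = "any-string") -> urllib.request.Request:
    """Hypothetical helper: build an OpenAI-style chat completion request (not sent)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode(),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def chat(prompt: str) -> str:
    """Hypothetical helper: send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=300) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    print(chat("Reply with the single word: ready"))
```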

First Impressions: The Verdict

After weeks of experimentation, my assessment is clear: the AMD Strix Halo with ROCm delivers performance that, for my workloads, justifies the setup complexity. Being able to run PyTorch workloads and large language models entirely on a local machine is a significant leap forward for local AI development.

The rough edges I ran into were mostly about the learning curve: ROCm's hardware-specific quirks and its dependency management. Once everything is configured properly, though, the system has been stable and fast, and in my use it holds its own against dedicated GPU setups.

For developers and researchers looking to push the boundaries of local AI inference without breaking the bank on enterprise hardware, the Strix Halo with ROCm represents an exciting frontier worth exploring.

